Agent Washing Is the New Cloud-Washing: How to Spot Fake AI Agents Before They Drain Your Budget

Agent washing is the practice of rebranding existing chatbots, RPA scripts, and basic automation tools as "AI agents" to command premium pricing and ride the agentic AI hype wave. Of the thousands of vendors now claiming to sell AI agents, Gartner estimates only about 130 are building genuinely agentic systems. The rest are selling you a chatbot in a trench coat. And it's costing enterprises billions.

Why I Started Paying Attention to This

I've been doing fractional CTO work for years now, and over the last 6 months I've watched the same pattern repeat across multiple startups and scaleups I advise. A founder comes to me and says: "We bought this AI agent platform. It was supposed to automate our [customer support / code review / lead qualification]. It doesn't do any of that. It just... chats."

Every time, same story. They spent somewhere between 30 and 80 grand on an "agentic AI solution" that turned out to be a chatbot with a fancy dashboard. The vendor used all the right words - autonomous, agentic, multi-step reasoning, tool use. The demos looked incredible. Then reality hit.

This isn't a niche problem. The agentic AI market hit $10.86 billion in 2026, and a significant chunk of that money is being lit on fire by companies buying repackaged chatbots. Gartner predicts that over 40% of agentic AI projects will be cancelled by the end of 2027 - and in the engagements I see, the root cause, more often than not, is agent washing.

5 Myths Agent-Washed Vendors Sell You

Myth 1: "Our AI Agent Is Fully Autonomous"

No current AI agent is fully autonomous in enterprise contexts. Full stop. If a vendor tells you their agent can run completely on its own with human-level decision-making, you're being sold a fantasy. Real agentic systems operate within defined guardrails. They need human oversight for high-stakes decisions. They escalate when they hit edge cases they haven't been trained on.

The 12% of agent pilots that actually make it to production share a specific operating profile: named ownership, scoped success criteria, automated evaluation, and the organisational stomach to ship and roll back without treating either as a verdict. That's the opposite of "fully autonomous" - it's carefully supervised autonomy within tight boundaries.

Myth 2: "It Learns and Adapts Over Time"

Most agent-washed products have zero persistent memory. Every interaction starts fresh. Ask it something on Monday and it has no idea what you asked the previous Friday. That's not an agent - that's a stateless API call with a chat interface bolted on.

Real agents maintain context across sessions. They remember your preferences, past decisions, ongoing projects. When I evaluate an "agentic" platform for a client, persistent memory is the first thing I test. It's also the easiest way to spot a fake. Ask the vendor: "Show me how this agent uses context from three weeks ago to inform today's decision." Watch them squirm.
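To make the stateless-versus-persistent distinction concrete, here is a minimal sketch of the kind of probe I mean. Everything in it is hypothetical: `StatelessBot`, `PersistentAgentMemory`, and the JSON file store are illustrative stand-ins, not any vendor's actual memory architecture. The point is only that a second "session" (a fresh object, as if the process had restarted) can still recall a decision recorded in the first.

```python
import json
import tempfile
from pathlib import Path
from typing import Optional


class StatelessBot:
    """Illustrative chatbot: nothing is stored, so nothing can be recalled."""

    def recall(self, key: str) -> Optional[str]:
        return None


class PersistentAgentMemory:
    """Hypothetical file-backed memory that survives across sessions."""

    def __init__(self, path: Path) -> None:
        self.path = path

    def _load(self) -> dict:
        # Read the whole store from disk; an absent file means an empty memory.
        if self.path.exists():
            return json.loads(self.path.read_text())
        return {}

    def remember(self, key: str, value: str) -> None:
        data = self._load()
        data[key] = value
        self.path.write_text(json.dumps(data))

    def recall(self, key: str) -> Optional[str]:
        return self._load().get(key)


store = Path(tempfile.mkdtemp()) / "agent_memory.json"

# Session 1 (say, three weeks ago): the agent records a decision.
PersistentAgentMemory(store).remember("refund_policy", "escalate refunds over 500")

# Session 2 (today): brand-new objects, as if the process had restarted.
recalled = PersistentAgentMemory(store).recall("refund_policy")
forgotten = StatelessBot().recall("refund_policy")

print(recalled)   # the decision from session 1
print(forgotten)  # None
```

A production memory layer would be far richer than a JSON file (retrieval, scoping, expiry), but the test is the same shape: write in one session, read in another.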

Myth 3: "It Handles Multi-Step Workflows Autonomously"

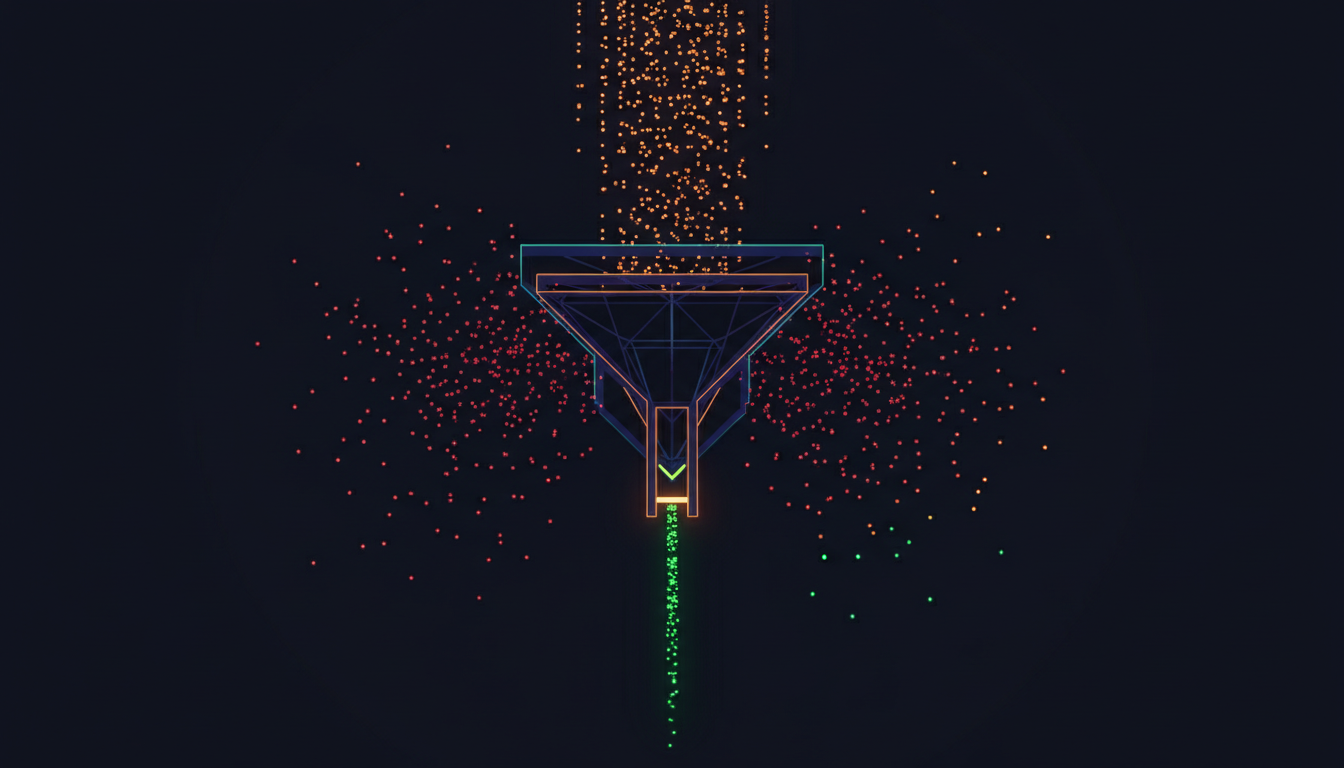

This is the big one. The defining characteristic of agentic AI is autonomous execution of multi-step tasks. You assign a goal, the agent figures out the steps, executes them, handles edge cases, recovers from failures.

What agent-washed products actually do: they present you with a step, wait for you to approve it, present the next step, wait again. That's a wizard interface, not an agent. It's the same thing as a well-designed form with conditional logic - except it costs 10x more and has a "powered by AI" badge.

A real agent makes 8-15 internal API calls per user request - reasoning, planning, executing tools, evaluating results, iterating. A chatbot makes one LLM call. Ask the vendor about their typical API call chain per task completion. If they can't answer, that tells you everything.
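As a rough illustration of why the call counts differ, the sketch below contrasts a single-call chatbot with a plan-execute-evaluate loop. It is a toy under stated assumptions: there is no real LLM here, `CallCounter`, `chatbot`, and `agent` are hypothetical names, and the "planner" simply runs every tool in order. What it shows is the shape of the chain, where each tool step costs a reasoning call, an execution call, and an evaluation call.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, List


@dataclass
class CallCounter:
    """Records every internal call so the chain length can be reported."""
    log: List[str] = field(default_factory=list)

    def record(self, kind: str) -> None:
        self.log.append(kind)


def chatbot(prompt: str, counter: CallCounter) -> str:
    counter.record("llm")  # one model call, then done
    return f"Here is some text about: {prompt}"


def agent(goal: str, tools: Dict[str, Callable[[str], str]],
          counter: CallCounter) -> List[str]:
    counter.record("plan")          # break the goal into steps
    plan = list(tools)              # stub planner: every tool, in order
    results = []
    for name in plan:
        counter.record("reason")    # decide how to use the tool
        counter.record("tool")      # execute it against a real system
        output = tools[name](goal)
        counter.record("evaluate")  # check the result before moving on
        results.append(output)
    counter.record("summarise")     # report back to the user
    return results


# Hypothetical tools standing in for real integrations.
tools = {
    "crm_lookup": lambda goal: f"found account for '{goal}'",
    "draft_email": lambda goal: f"drafted reply for '{goal}'",
    "update_ticket": lambda goal: f"closed ticket for '{goal}'",
}

chat_counter, agent_counter = CallCounter(), CallCounter()
chatbot("refund request", chat_counter)
results = agent("refund request", tools, agent_counter)

print(len(chat_counter.log), len(agent_counter.log))  # prints: 1 11
```

With three tools the loop makes 11 calls (1 plan + 3 x 3 + 1 summary). A vendor with a genuine agent should be able to give you a breakdown like `agent_counter.log` for a typical task.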

Myth 4: "We Integrate With Everything"

Agents that can only chat but can't connect to your email, CRM, database, or codebase aren't agents. They're conversation engines. When I hear "we integrate with everything," I immediately ask for the integration list. Often it's a handful of pre-built connectors plus a "coming soon" roadmap that's been "coming soon" for 12 months.

Real agentic systems need to touch real infrastructure to execute real tasks. They send emails. They update records. They trigger deployments. They query databases. If the vendor's "agent" can only read and respond to text, you're paying agent prices for chatbot capabilities.

Myth 5: "Our Platform Is Enterprise-Grade"

"Enterprise-grade" in the agent-washing vocabulary usually means "we added SSO and an audit log." It doesn't mean they've solved governance, security, cost control, or compliance.

Trust and vendor lock-in define every enterprise agentic AI decision in 2026. Real enterprise-grade agentic AI includes: proper access controls (what can the agent read, write, delete?), audit trails (every decision logged with reasoning), kill switches (immediate human override), cost guardrails (token budgets, API call limits), and compliance alignment (EU AI Act, GDPR, industry-specific regulations).

If the vendor can't walk you through their governance stack in detail, they haven't built one. And without governance, deploying an agent in production is irresponsible.
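For a sense of what the smallest possible governance layer looks like, here is a sketch that puts four of those controls (access control, token budget, kill switch, audit log) in front of every agent action. The `Governor` class, the action names, and the budget numbers are all hypothetical; a real platform would also need identity, persistence, and compliance reporting on top.

```python
from dataclasses import dataclass, field
from typing import List, Set


class GuardrailViolation(Exception):
    """Raised when an action is blocked by a guardrail."""


@dataclass
class Governor:
    allowed_actions: Set[str]
    token_budget: int
    killed: bool = False
    tokens_used: int = 0
    audit_log: List[dict] = field(default_factory=list)

    def authorise(self, action: str, tokens: int, reasoning: str) -> None:
        # Decide the verdict, then log it whether or not the action is allowed.
        if self.killed:
            verdict = "blocked: kill switch engaged"
        elif action not in self.allowed_actions:
            verdict = "blocked: action not permitted"
        elif self.tokens_used + tokens > self.token_budget:
            verdict = "blocked: token budget exceeded"
        else:
            verdict = "allowed"
            self.tokens_used += tokens
        self.audit_log.append({"action": action, "tokens": tokens,
                               "reasoning": reasoning, "verdict": verdict})
        if verdict != "allowed":
            raise GuardrailViolation(verdict)


gov = Governor(allowed_actions={"crm.read", "email.send"}, token_budget=1000)
outcomes = []
requests = [("crm.read", 400), ("crm.delete", 10), ("email.send", 700)]
for action, tokens in requests:
    try:
        gov.authorise(action, tokens, reasoning="demo request")
        outcomes.append("allowed")
    except GuardrailViolation as exc:
        outcomes.append(str(exc))

gov.killed = True  # a human hits the kill switch
try:
    gov.authorise("crm.read", 1, reasoning="after kill switch")
    outcomes.append("allowed")
except GuardrailViolation as exc:
    outcomes.append(str(exc))

print(outcomes)
```

The design choice worth noticing is that blocked attempts are logged too. An audit trail that only records successes tells you nothing about what the agent tried to do.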

The Real vs Fake Agent Comparison

I've put together this table from evaluating dozens of "agentic" platforms across my fractional CTO engagements. Use it as a quick filter.

| Capability | Agent-Washed Product (Chatbot in Disguise) | Real Agentic AI System |
|---|---|---|
| Task execution | Responds to prompts one at a time | Plans and executes multi-step tasks autonomously |
| Memory | Stateless - every session starts fresh | Persistent memory across sessions and contexts |
| Tool use | Text generation only (or 1-2 basic integrations) | Connects to real systems: APIs, databases, email, code repos |
| Error handling | Crashes or returns generic error messages | Retries, tries alternatives, escalates when genuinely stuck |
| API calls per request | 1 LLM call per interaction | 8-15 internal calls (reasoning, planning, execution, evaluation) |
| Governance | "We added SSO" | Access controls, audit trails, kill switches, cost guardrails |
| Proactive behaviour | Only responds when prompted | Monitors, flags issues, acts on schedules without prompting |
| Demo vs production | Impressive demo, disappointing reality | Consistent performance with reference customers at 6+ months |
| Cost structure | Flat subscription hiding limited usage | Usage-based pricing reflecting actual compute and API costs |
| Typical enterprise cost | 30-80k/year for glorified chat | Variable, but ROI-justified with measurable automation rates |

The CTO's 7-Question Agent Evaluation Checklist

When I evaluate agentic AI platforms for clients, these are the seven questions that separate real from fake in about 30 minutes.

1. "Show me a multi-step task completing without human intervention." Not a scripted demo. A live task with real data. If every step needs approval, it's a wizard, not an agent. You want to see it reason through unexpected situations.

2. "How does this agent use context from three weeks ago?" Ask for specifics about their memory architecture. "Conversation history" isn't good enough - you need cross-session, cross-context persistence. Can it reference a decision it made last month when facing a similar situation today?

3. "What systems does it actually connect to right now - not on your roadmap?" A list of "coming soon" integrations is a red flag. Real agents ship with real integrations. Ask to see the integration working, not a slide about it.

4. "Walk me through what happens when the agent fails mid-task." Listen for specifics: retry logic, alternative approaches, escalation paths, state recovery. If they handwave, they haven't built failure handling. And failure handling is where the real engineering is.

5. "What's the typical API call chain per completed task?" This is the technical litmus test. A chatbot: 1 call. A real agent: 8-15 calls across reasoning, planning, tool execution, result evaluation, and iteration. If the vendor can't answer this with specifics, they don't have an agent.

6. "Can I speak with a customer who's been running this in production for 6+ months?" Agent washing falls apart over time. The real products have reference customers who can speak to sustained performance, actual automation rates, and real ROI. No references at 6 months? Walk away.

7. "What's your governance model for agent access, costs, and compliance?" If the answer is vague or focuses only on SSO, the platform isn't enterprise-ready. You need: role-based access controls, per-agent token budgets, decision audit logs, human override mechanisms, and compliance documentation.
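For question 4, the answer you're listening for has a recognisable shape: retry the step, fall back to an alternative, and escalate to a human only when both are exhausted. The sketch below is one hypothetical way to express that; `run_step`, the strategy names, and the simulated failures are invented for illustration, and a real system would add backoff delays and state checkpoints.

```python
from typing import Callable, List, Tuple


def run_step(strategies: List[Tuple[str, Callable[[], str]]],
             max_retries: int = 2) -> Tuple[str, List[str]]:
    """Try each strategy up to max_retries times; escalate if all fail."""
    trace: List[str] = []
    for name, attempt in strategies:
        for try_number in range(1, max_retries + 1):
            try:
                result = attempt()
                trace.append(f"{name}: succeeded on try {try_number}")
                return result, trace
            except Exception as exc:
                trace.append(f"{name}: try {try_number} failed ({exc})")
    trace.append("escalated to human")
    return "ESCALATED", trace


def primary_api() -> str:
    # Simulated integration that always times out.
    raise TimeoutError("CRM timed out")


fallback_calls = {"n": 0}


def fallback_export() -> str:
    # Simulated alternative path that fails once, then works.
    fallback_calls["n"] += 1
    if fallback_calls["n"] < 2:
        raise ConnectionError("export not ready")
    return "record updated via export"


result, trace = run_step([("primary_api", primary_api),
                          ("fallback_export", fallback_export)])
stuck_result, stuck_trace = run_step([("primary_api", primary_api)])

for line in trace:
    print(line)
```

If a vendor can show you the equivalent of `trace` for a real failed task, with the reasoning behind each fallback, they've built failure handling. If all they can show is an error toast, they haven't.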

Why This Matters for Technical Due Diligence

I do a lot of technical due diligence for investors and VCs. In the last quarter, I've started seeing something new: startups listing "agentic AI capabilities" in their pitch decks and technical architecture docs. Investors are asking me to validate these claims.

And increasingly, what I find is agent washing all the way down. The "agent" is a wrapper around the OpenAI API with some prompt templates. The "autonomous workflow engine" is a Zapier clone with an LLM step. The "multi-agent orchestration platform" is three chatbots in a for-loop.

If you're a founder preparing for tech DD and you've built something genuinely agentic, document the architecture properly. Show the reasoning chain. Show the tool use. Show the persistent state management. Show the governance layer. Because if you can't distinguish your system from an agent-washed product, the DD reviewer won't be able to either.

And if you're an investor - independent technical due diligence is the only way to cut through agent-washing claims. A non-technical partner reviewing a pitch deck cannot tell the difference between genuine agentic architecture and a well-designed chatbot. This is exactly the kind of assessment where you need someone who's built these systems, not someone who's read about them.

What to Actually Do Instead

So you need AI automation in your business. What do you do when the market is flooded with agent-washed products?

Start with the problem, not the vendor. Define exactly what you need automated. Map the multi-step workflow. Identify where human judgment is non-negotiable and where it isn't. Then evaluate vendors against that specific workflow - not against their marketing claims.

Run a paid proof-of-concept before committing. Any vendor confident in their product will agree to a 2-4 week paid POC against your actual workflow with your actual data. If they insist on a 12-month contract upfront, that's a signal. Real agents demonstrate value quickly because the automation is measurable.
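One way to keep a POC honest is to agree on a single number up front. The sketch below computes an automation rate as the share of tasks completed with zero human interventions. The `TaskRecord` fields and the sample data are hypothetical; the definition of "automated" is an assumption you'd want to negotiate with the vendor before the POC starts.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class TaskRecord:
    task_id: str
    completed: bool
    human_interventions: int


def automation_rate(records: List[TaskRecord]) -> float:
    """Fraction of tasks finished end-to-end with no human stepping in."""
    if not records:
        return 0.0
    hands_off = sum(1 for r in records
                    if r.completed and r.human_interventions == 0)
    return hands_off / len(records)


# Made-up POC log, for illustration only.
poc_log = [
    TaskRecord("T-1", completed=True, human_interventions=0),
    TaskRecord("T-2", completed=True, human_interventions=3),
    TaskRecord("T-3", completed=False, human_interventions=1),
    TaskRecord("T-4", completed=True, human_interventions=0),
]

rate = automation_rate(poc_log)
print(f"{rate:.0%}")  # prints: 50%
```

A task that completes only after three approvals counts against the rate here, which is deliberate: that's the wizard pattern, not automation.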

Bring technical oversight to the evaluation. This is where a fractional CTO earns their keep. Having someone who understands the difference between a stateless API wrapper and a genuine agentic system will save you 50k+ in wasted vendor spend. I've done this evaluation dozens of times - it typically takes 2-3 days to assess a vendor properly and saves months of disappointment.

Consider building before buying. For many workflows, a well-architected system using Claude Code, LangGraph, or CrewAI - with proper tool definitions, memory management, and governance - will outperform a 60k/year agent-washed platform. The key is having structured AI adoption - not vibe-coding an agent together and hoping for the best.

The 130 Number Is Probably Generous

Gartner says about 130 vendors are building genuine agentic systems. In my experience, even that number is generous. Some of those 130 have real agentic capabilities on their roadmap but not yet in production. Some have agentic features for one narrow use case but market themselves as general-purpose platforms.

One analysis of 847 AI agent deployments found that 76% experienced critical failures within the first 90 days, and 88% of AI agents never make it to production at all. Those numbers aren't bad because agentic AI doesn't work; it works brilliantly when built and deployed properly. They're bad because most of what's being sold as "agentic AI" isn't agentic AI.

The survivors - the 12% that make it to production - return 171% ROI. That's a massive return. The technology is real. The opportunity is real. The problem is purely on the vendor side: most of them are lying about what they've built.

Frequently Asked Questions

What is agent washing in AI?

Agent washing is the practice of rebranding existing chatbots, RPA tools, and basic automation scripts as "AI agents" or "agentic AI" without adding genuine autonomous capabilities. Gartner estimates only about 130 of the thousands of vendors claiming to sell AI agents are building genuinely agentic systems. The rest are charging agent-level prices for chatbot-level functionality.

How can I tell if an AI agent vendor is real or agent-washed?

Test for five things: persistent memory across sessions (not just conversation history), autonomous multi-step task execution without constant approval, real system integrations working today (not on a roadmap), specific failure handling with retry logic, and reference customers running in production for 6+ months. If any of these fail, you're likely looking at an agent-washed product.

Why do most agentic AI projects fail?

88% of AI agents fail to reach production. The primary causes are agent washing (buying chatbots sold as agents), unclear success criteria, missing governance frameworks, infrastructure costs running 3-5x initial projections at production scale, and lack of technical oversight during vendor evaluation. The 12% that succeed share common traits: named ownership, scoped criteria, and willingness to iterate.

How much does agent washing cost enterprises?

The agentic AI market reached $10.86 billion in 2026, with agentic AI representing 10-15% of enterprise IT budgets. With 76% of agent deployments experiencing critical failures within 90 days and over 40% of projects projected to be cancelled by the end of 2027, billions are being wasted on solutions that don't deliver genuine agentic capabilities. Individual companies typically waste 30-80k per failed agent-washed platform purchase.

Should I hire a fractional CTO to evaluate AI agent vendors?

If you're a non-technical founder or your team lacks deep AI engineering experience, yes. A fractional CTO who's built and evaluated agentic systems can assess a vendor in 2-3 days and save you months of wasted investment. The evaluation covers architecture review, integration testing, governance assessment, and reference customer validation - things that can't be assessed from a sales demo alone.