The AI Code Review Crisis: Why Your Senior Engineers Are Drowning in Pull Requests

Your Senior Engineers Are Drowning in AI-Generated Pull Requests - and It's Your Fault

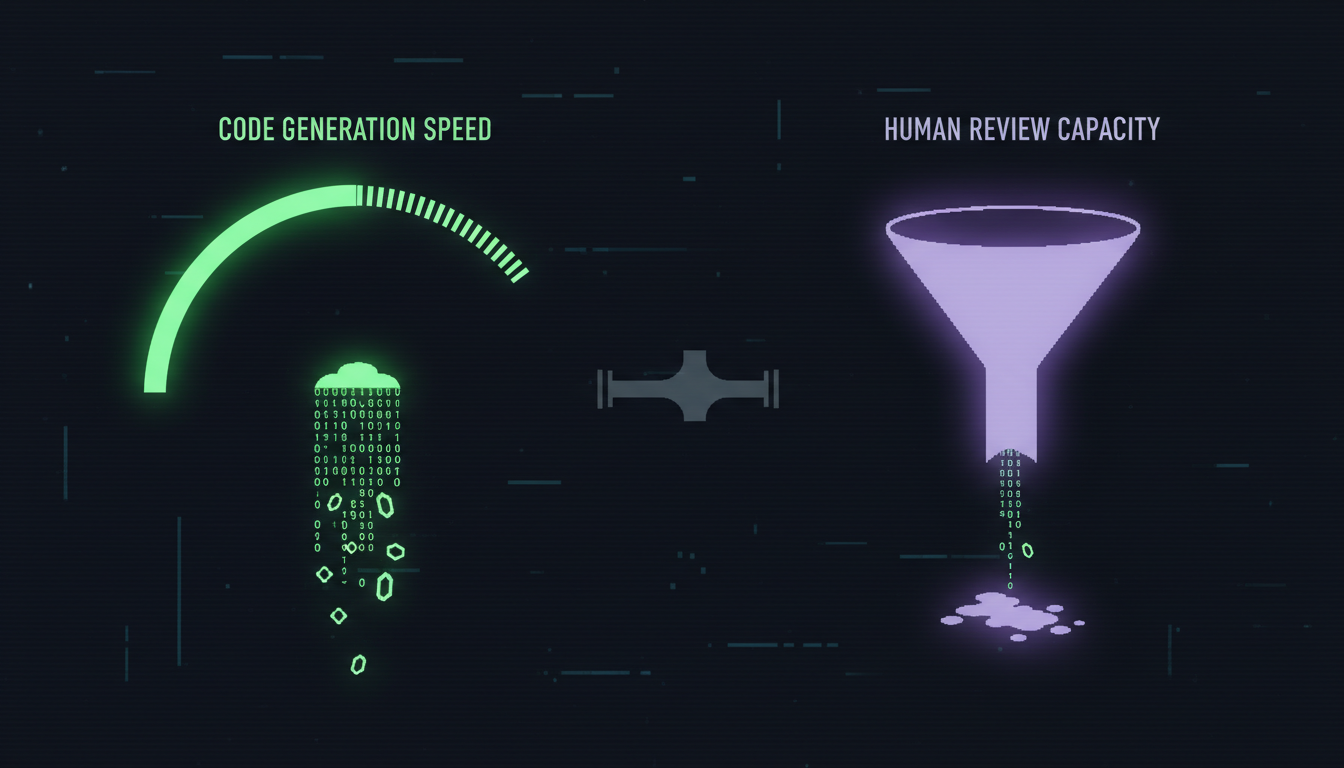

The AI code review crisis is the growing bottleneck where AI-accelerated code generation overwhelms human review capacity, causing PR review times to spike by up to 441%, burning out senior engineers, and creating a false sense of team productivity. If your engineering team adopted AI coding tools in 2025 and you haven't restructured your review process, your best people are quietly suffocating under a tsunami of machine-generated pull requests.

So, I've been doing fractional CTO work for years now, and I've started seeing the same pattern repeat across almost every team that adopted AI coding tools aggressively in 2025. The developers are writing more code than ever. The PR count is through the roof. The velocity charts look incredible. And yet - the senior engineers look exhausted, the bug count is creeping up, and nobody can explain why "shipping faster" feels worse than before.

I'll tell you why. You sped up the engine but forgot to upgrade the brakes.

The Numbers Tell the Story

Faros AI analysed telemetry data from 22,000 developers across 4,000+ teams and found something that should alarm every CTO: while developers on AI-heavy teams complete 21% more tasks and merge 98% more pull requests, PR review time increased by 91%. The median time spent in PR review is up a staggering 441%.

Read that again. Four hundred and forty-one percent.

PR sizes grew by 154%. And here's the kicker - 31% more PRs are now merging with zero review. Not because teams decided to skip reviews. Because reviewers physically cannot keep up with the volume.

Faros calls this "Acceleration Whiplash" - the rest of the pipeline (review, testing, validation, incident response) was designed for human-paced output. AI coding tools shattered that pace, but nobody redesigned the pipeline to handle it.

The Trust Problem Makes Everything Worse

According to Sonar's 2026 State of Code Developer Survey, AI now accounts for 42% of all committed code - and developers expect that to hit 65% by 2027. But 96% of developers don't fully trust the functional accuracy of AI-generated code.

Think about what that means for your senior engineers. They're reviewing nearly double the volume of PRs, and they trust roughly none of it to be correct without thorough human verification. The burden has shifted from creation to verification. Your seniors aren't writing code anymore - they're auditing machine output, line by line, PR by PR, all day long.

A Harvard Business Review study from February 2026 put it bluntly: AI doesn't reduce work, it intensifies it. 83% of workers said AI increased their workload, and 62% reported burnout. The promised productivity gains lead enthusiastic adopters to take on more work because AI makes "doing more" feel possible. It's a recipe for cognitive overload.

The Burnout Is Already Here

A LeadDev survey found that 22% of developers are at critical burnout levels, with senior engineers reporting lower job satisfaction than juniors. Engineers using AI tools for coding experienced a 19.6% rise in out-of-hours commits - they're working evenings and weekends just to keep up with the review backlog.

And the HackerRank 2026 survey found 67% of developers report increased stress specifically related to AI code validation responsibilities. Not coding stress. REVIEW stress.

I've seen this first-hand in client teams. A senior backend engineer at a healthtech startup told me he was spending 70% of his day reviewing PRs from two junior developers who were using Cursor and Claude Code. He said he shipped more code in Q1 2026 than in any other quarter of his career - and felt more drained than in any other quarter of his career. He quit in April.

That's the real cost nobody's measuring.

Why This Happens: The Amdahl's Law Problem

There's a well-known principle in computing called Amdahl's Law: the overall speedup of a system is capped by the share of the work you didn't speed up. You can parallelise and accelerate individual parts all you want - the part you left untouched ends up dominating the total time.

AI coding tools accelerated the code-writing phase by 2-5x. But code review, testing, and deployment are still running at human speed. Worse - they're now running SLOWER than before because the volume and complexity of what needs reviewing have exploded.
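To put rough numbers on that, here is a minimal sketch of Amdahl's Law in Python. The 30% share of delivery time spent writing code is an assumption for illustration, not a figure from the Faros data, and `overall_speedup` is a hypothetical helper name.

```python
def overall_speedup(accelerated_fraction: float, factor: float) -> float:
    """Amdahl's Law: speedup of the whole when only one part gets faster.

    accelerated_fraction: share of total time spent in the part you sped up.
    factor: how many times faster that part became.
    """
    if not 0.0 <= accelerated_fraction <= 1.0:
        raise ValueError("accelerated_fraction must be between 0 and 1")
    if factor <= 0:
        raise ValueError("factor must be positive")
    untouched = 1.0 - accelerated_fraction
    return 1.0 / (untouched + accelerated_fraction / factor)


# ASSUMPTION (illustrative only): writing code is 30% of end-to-end
# delivery time; review, testing and deployment make up the other 70%.
CODING_SHARE = 0.30

for factor in (2, 5, 1000):
    speedup = overall_speedup(CODING_SHARE, factor)
    print(f"coding {factor}x faster -> delivery {speedup:.2f}x faster")
```

Under that assumption, coding 2x faster makes delivery about 1.18x faster, 5x gives about 1.32x, and even a 1000x speedup tops out near 1.43x. And the formula assumes the other stages hold steady. If review gets slower, the real number is worse.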

Here's what the pipeline looks like in most teams I audit:

| Pipeline Stage | Before AI Tools | After AI Tools (Unstructured Adoption) |
|---|---|---|
| Code writing speed | Baseline | 2-5x faster |
| PR volume per developer | Baseline | +98% (Faros data) |
| PR size | Baseline | +154% larger |
| Review time per PR | Baseline | +441% median (Faros data) |
| Zero-review merges | Rare (policy violation) | +31% increase (backlog overflow) |
| Senior engineer time on reviews | ~30% of day | ~60-70% of day |
| Bug detection before production | Standard | Declining (review fatigue) |

The output doubled. The review capacity stayed the same. Something had to break - and it's your best people.

What Most CTOs Get Wrong

I've had this conversation with about a dozen CTOs in the last three months. Most of them made the same mistake: they adopted AI coding tools and measured the wrong thing.

They measured lines of code generated. PRs merged. Sprint velocity. Story points burned (which, as I've written before, are a rubbish metric to begin with). Everything looked amazing on the dashboard. The engineering team appeared to be shipping 2x faster.

But nobody measured time-in-review per PR, reviewer cognitive load, review-to-merge ratio, rework rate after merge, senior engineer satisfaction, or out-of-hours work patterns.

When I audit teams as a fractional CTO, these are the first metrics I look at. Because the velocity dashboard can lie to you. The review queue doesn't.
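If you want a starting point, a few of these numbers fall out of data your Git host already has. The sketch below is a rough illustration in Python, not a finished tool: the `MergedPR` record and its field names are hypothetical, the sample PRs are made up, and "time-in-review" is assumed to mean hours from PR opened to PR merged.

```python
from collections import Counter
from dataclasses import dataclass
from datetime import datetime, timedelta
from statistics import median
from typing import Optional


@dataclass
class MergedPR:
    # Hypothetical record shape; map it from whatever your Git host exports.
    opened_at: datetime
    merged_at: datetime
    first_review_at: Optional[datetime]  # None means merged with zero review
    reviewer: Optional[str]


def review_metrics(prs):
    """Summarise review health for a list of merged PRs."""
    reviewed = [pr for pr in prs if pr.first_review_at is not None]
    # ASSUMPTION: time-in-review = hours between opening and merging.
    hours = [(pr.merged_at - pr.opened_at).total_seconds() / 3600 for pr in reviewed]
    load = Counter(pr.reviewer for pr in reviewed)
    return {
        "median_hours_in_review": median(hours) if hours else 0.0,
        "zero_review_merge_rate": (len(prs) - len(reviewed)) / len(prs) if prs else 0.0,
        # Share of all reviews done by the single busiest reviewer.
        "top_reviewer_share": max(load.values()) / len(reviewed) if reviewed else 0.0,
    }


# Made-up sample data, for illustration only.
t0 = datetime(2026, 1, 5, 9, 0)
h = lambda n: timedelta(hours=n)
prs = [
    MergedPR(t0, t0 + h(10), t0 + h(2), "alice"),
    MergedPR(t0, t0 + h(30), t0 + h(20), "alice"),
    MergedPR(t0, t0 + h(20), t0 + h(5), "bob"),
    MergedPR(t0, t0 + h(1), None, None),  # merged without any review
]

metrics = review_metrics(prs)
for name, value in metrics.items():
    print(f"{name}: {value:.2f}")
```

Run it weekly and watch the trend, not the absolute values. A rising median alongside a rising zero-review rate is the pattern to worry about.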

The Structured Approach: How to Fix This

So, look, I'm not saying AI coding tools are bad. I'm saying unstructured adoption is bad. There's a massive difference between "we gave everyone Cursor licences and told them to go faster" and "we redesigned our development pipeline to handle AI-augmented output."

Here's what I recommend to every team I work with through our AI adoption service:

1. Add automated review gates BEFORE human review. Tools like CodeRabbit ($24/user/month), Qodo ($30/user/month), or SonarQube can catch 40-60% of issues before a human ever looks at the PR. Teams using these tools report 42-48% better bug detection with significantly less human effort. The AI code review market is growing from $2 billion to $5 billion by 2028 for a reason - it works.

2. Enforce PR size limits. If AI generates a 500-line PR, break it up. No human can meaningfully review a PR that large. Set a hard limit - 200 lines maximum per PR. Yes, this means more PRs, but each one is actually reviewable.

3. Require AI-generated code to be flagged. Your reviewers need to know which code was human-written and which was AI-generated so they can adjust their scrutiny level. Some teams use commit message conventions, others use PR labels. Pick a system and enforce it.

4. Rotate review load across the team. If your two most senior engineers are doing all the reviews, you've got a single point of failure and a burnout guarantee. Distribute review responsibility. Pair mid-level engineers with seniors on AI-generated code reviews as a training mechanism.

5. Measure what matters. Track time-in-review, reviewer load distribution, zero-review merge rate, and post-merge defect rate. If time-in-review is climbing while zero-review merges are increasing, you've got a crisis brewing.
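Points 2, 3 and 4 are easy to automate as a pre-review check. Here's a minimal Python sketch of the idea, under a few assumptions of mine: the 200-line cap counts added plus deleted lines, the `ai-generated` label name is hypothetical, authors declare AI assistance themselves, and reviewers are picked by fewest open reviews. Wiring it into your CI or Git host is left out on purpose.

```python
from dataclasses import dataclass, field

MAX_CHANGED_LINES = 200    # the hard cap recommended above
AI_LABEL = "ai-generated"  # hypothetical label name; pick your own convention


@dataclass
class PullRequest:
    title: str
    author: str
    lines_added: int
    lines_deleted: int
    ai_assisted: bool = False  # ASSUMPTION: declared by the author
    labels: set = field(default_factory=set)


def gate_failures(pr: PullRequest) -> list:
    """Return the reasons this PR should not reach a human reviewer yet."""
    failures = []
    # ASSUMPTION: PR size = added + deleted lines.
    changed = pr.lines_added + pr.lines_deleted
    if changed > MAX_CHANGED_LINES:
        failures.append(
            f"{changed} changed lines exceeds the {MAX_CHANGED_LINES}-line cap; split the PR"
        )
    if pr.ai_assisted and AI_LABEL not in pr.labels:
        failures.append(f"AI-assisted PR is missing the '{AI_LABEL}' label")
    return failures


def pick_reviewer(open_reviews: dict, author: str) -> str:
    """Pick the reviewer with the fewest open reviews, never the author.

    Ties are broken alphabetically so the result is deterministic.
    """
    candidates = {name: n for name, n in open_reviews.items() if name != author}
    if not candidates:
        raise ValueError("no eligible reviewer")
    return min(sorted(candidates), key=candidates.get)


pr = PullRequest("Add billing export", "bob", lines_added=340, lines_deleted=25, ai_assisted=True)
for reason in gate_failures(pr):
    print("BLOCKED:", reason)

print("Suggested reviewer:", pick_reviewer({"alice": 6, "bob": 2, "carol": 2}, author=pr.author))
```

In this example the PR is blocked twice (365 changed lines, no label), and the suggested reviewer is carol, not the senior who already has six reviews open. Least-loaded assignment is only one way to rotate. A simple round-robin works too if your team is small.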

| Approach | Unstructured AI Adoption | CTO-Led Structured Adoption (Metamindz) |
|---|---|---|
| AI tool rollout | Hand out licences, hope for the best | Pipeline redesign before tool deployment |
| Review process | Same as before, but with 2x the PRs | Automated gates + human review on filtered output |
| PR size management | No limits - AI generates whatever it wants | 200-line caps, mandatory decomposition |
| Code provenance | Nobody knows what's AI vs human | Flagging system for AI-generated code |
| Reviewer load | Seniors absorb everything | Distributed review with paired mentoring |
| Metrics tracked | Velocity, PRs merged, story points | Time-in-review, reviewer load, post-merge defects |
| Burnout risk | High - 67% report increased stress | Managed - review load capped and distributed |

The Tech DD Angle

One more thing worth mentioning. When we do technical due diligence for investors, the review process is now one of the first things we examine. If a startup's PR review metrics show a 400%+ spike in review time alongside a rising zero-review merge rate, that's a red flag. It means the team is shipping code that hasn't been properly verified.

Investors are increasingly asking about this. 70% of private investors now require tech DD from digital startups before committing capital, and the question "how do you handle AI-generated code review" is becoming standard. If your answer is "we trust Cursor" or "our seniors handle it," that's not going to fly.

The Bottom Line

AI coding tools are brilliant. I use them daily. My team uses Claude Code, Cursor, and GitHub Copilot across different projects. We built MintyAI in 2 weeks using structured AI workflows - something that would have taken 4-5 months traditionally.

But the difference between that success and the burnout crisis I'm seeing across the industry is one word: structure. We redesigned our pipeline before we scaled AI output. Most teams didn't. They just hit the accelerator without checking if the road was built for it.

If your senior engineers are spending more than 50% of their time reviewing PRs, if your zero-review merge rate is climbing, if your best people are looking tired - the AI tools aren't the problem. Your process is.

Fix the pipeline. Or lose the people who make your pipeline worth having.

Frequently Asked Questions

Why has PR review time increased so dramatically with AI coding tools?

AI coding tools accelerate code generation by 2-5x, but the review, testing, and validation pipeline wasn't redesigned to match. Faros AI data from 22,000 developers shows PR volume up 98% and PR sizes up 154%, while human review capacity remained static - causing median review time to spike 441%.

How can teams reduce the AI code review burden on senior engineers?

Deploy automated review gates using tools like CodeRabbit or Qodo before human review, enforce 200-line PR size limits, flag AI-generated code for adjusted scrutiny, rotate review load across the team, and track reviewer-specific metrics like time-in-review and load distribution rather than just aggregate velocity.

Are AI code review tools effective at catching issues?

Yes. Teams using AI code review tools like CodeRabbit, Qodo, and SonarQube report 40-60% faster reviews and 42-48% better bug detection rates. The AI code review market is projected to grow from $2 billion to $5 billion by 2028. These tools are most effective as a first-pass filter before human review, not as a replacement for it.

What metrics should CTOs track to spot the AI code review crisis early?

Track time-in-review per PR, reviewer load distribution across team members, zero-review merge rate, post-merge defect rate, and out-of-hours commit patterns. If time-in-review is climbing while zero-review merges increase, you have an overloaded review pipeline that needs structural intervention.

How does the AI code review crisis affect technical due diligence?

Investors conducting tech DD now examine PR review metrics as standard. A 400%+ spike in review time alongside rising zero-review merges signals unverified code reaching production - a significant red flag. 70% of private investors require tech DD before committing capital, and AI-generated code review processes are becoming a core assessment area.