AI Compliance Documentation: Best Practices

Want to avoid hefty fines and legal headaches from AI regulations? Here's the deal: AI compliance documentation is no longer optional. It's your proof that your AI systems are playing by the rules. Regulators don't care about fancy policies - they want hard evidence: logs, testing records, and audit trails that show you're doing what you claim.

Key Points to Know:

- Why It Matters: Non-compliance with AI laws like the EU AI Act could cost you up to €35 million or 7% of global turnover. Most companies aren't ready - as of early 2025, only 25% had fully implemented AI governance programmes.

- What You Need: A central AI system inventory, risk assessments, technical documentation, bias testing, and clear oversight protocols. High-risk systems (e.g., hiring or credit scoring) need extra scrutiny, like annual validations and constant monitoring.

- How to Stay on Top: Assign clear roles (e.g., CTO or AI governance committee), use tools like Model Cards for documentation, and automate wherever possible. Regular reviews and drift monitoring are essential.

Bottom line? Start treating documentation like code: version control, automated updates, and audit-ready records. It's not just about ticking boxes - it's about protecting your business and building trust. Let’s dive into how to make it happen.

Setting Up Governance for Documentation

Getting documentation governance right is like building a bridge between AI policies and the real-world application of those policies. But here's the kicker: only 27% of boards have formally included AI governance in their committee charters, even though 62% regularly discuss AI matters [1]. That gap can lead to serious problems, especially when accountability isn't clear. Peter Vogel, AI Strategy Director at Helium42, puts it perfectly:

"Governance is not something separate that happens to your AI systems. It is something built in from day one." [5]

Let’s break down how to assign responsibilities and set up solid record-keeping processes.

Defining Roles and Responsibilities

Start by appointing a single executive - often a fractional CTO or COO - who will take ownership of the governance framework and keep the board informed about AI risks [7]. Without someone clearly in charge, governance efforts can easily remain just a nice idea rather than a functional system.

Next, form a cross-functional AI governance committee. This group should bring together Legal, Risk, Data, and Product teams [5][8]. Their job? Setting governance standards, running bias audits, and keeping an up-to-date inventory of AI systems. It’s also ideal to have the Chief Risk Officer or Chief Compliance Officer brief the board on documentation needs and assign compliance leads across the organisation [1].

For day-to-day operations, appoint Data Stewards. These are the experts who manage the data lifecycle for specific AI projects - typically one person for every two to three projects [6]. Their responsibilities include conducting pre-training audits, tracking data provenance, and keeping an eye on input and output quality. Fun fact: 61% of data governance failures happen during the data prep stage, not during model design [6]. So, having the right people in place early is key.

To avoid confusion, use a RACI matrix. This handy tool clarifies who is Responsible, Accountable, Consulted, and Informed for tasks like approving new tools, reviewing vendor contracts, or monitoring model drift [7]. For instance, your CTO might be accountable for signing off on a new AI tool, the Security Lead responsible for the technical review, Legal consulted on the terms, and the Business Owner simply informed.
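A RACI matrix is easier to keep honest when it lives as data rather than in a slide deck. Here's a minimal sketch in Python, assuming a hypothetical `RaciEntry` record and `validate_raci` helper (neither comes from any standard library or framework). The first entry mirrors the example above; the second is invented purely to show the check catching a gap.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class RaciEntry:
    """Who holds each RACI role for one governance task (hypothetical structure)."""
    task: str
    responsible: str
    accountable: str
    consulted: tuple = ()
    informed: tuple = ()


def validate_raci(entries):
    """Return a list of problems: duplicate tasks or empty Responsible/Accountable roles."""
    problems = []
    seen = set()
    for entry in entries:
        if entry.task in seen:
            problems.append(f"duplicate task: {entry.task}")
        seen.add(entry.task)
        if not entry.responsible.strip():
            problems.append(f"no responsible party: {entry.task}")
        if not entry.accountable.strip():
            problems.append(f"no accountable party: {entry.task}")
    return problems


raci = [
    # Mirrors the worked example in the text.
    RaciEntry(
        task="Approve new AI tool",
        responsible="Security Lead",
        accountable="CTO",
        consulted=("Legal",),
        informed=("Business Owner",),
    ),
    # Invented entry with a deliberately empty role, to show the check firing.
    RaciEntry(task="Monitor model drift", responsible="", accountable="CTO"),
]

problems = validate_raci(raci)
print(problems)
```

Keeping the matrix in a version-controlled file means a change of owner shows up as a reviewable diff, which fits the "treat documentation like code" idea used throughout this guide.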

Once roles are sorted, the next step is setting up proper record-keeping protocols.

Creating Record-Keeping Processes

You’ll need a solid record-keeping framework that covers training data quality, input data quality, and output validation [6].

Start with a centralised AI system inventory. This should include every AI system - whether it’s built in-house, purchased, or even “shadow AI” that’s been flying under the radar. Each system should have a unique ID, a business owner, a risk tier, documented data inputs, and a record of its last validation date [1][7]. Interestingly, many organisations discover two to three times more AI models than they expected during their first inventory check [1].
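To make the inventory fields concrete, here's a rough sketch of one record and a tiny in-memory register. The class names, the `origin` field, and the example systems are illustrative assumptions; in practice this would more likely sit in a database or a GRC tool.

```python
from dataclasses import dataclass
from datetime import date
from typing import Optional


@dataclass
class AISystemRecord:
    """One row in the central AI system inventory (field names are assumptions)."""
    system_id: str
    name: str
    business_owner: str              # a named person, not "the team"
    risk_tier: str                   # e.g. "high", "medium" or "low"
    data_inputs: list
    last_validated: Optional[date] = None
    origin: str = "in-house"         # "in-house", "purchased" or "shadow"


class Inventory:
    """Minimal register that enforces unique IDs and a named owner."""

    def __init__(self):
        self._records = {}

    def register(self, record):
        if record.system_id in self._records:
            raise ValueError(f"duplicate system ID: {record.system_id}")
        if not record.business_owner.strip():
            raise ValueError(f"{record.system_id} has no named business owner")
        self._records[record.system_id] = record

    def never_validated(self):
        """IDs of systems with no validation on record, sorted for stable output."""
        return sorted(
            r.system_id for r in self._records.values() if r.last_validated is None
        )


inventory = Inventory()
inventory.register(AISystemRecord(
    "AI-001", "CV screener", "Head of Talent", "high",
    ["applicant CVs"], last_validated=date(2025, 6, 1),
))
inventory.register(AISystemRecord(
    "AI-002", "Support chatbot", "Support Lead", "low",
    ["chat transcripts"], origin="purchased",
))
print(inventory.never_validated())
```

Rejecting records without a named owner at registration time is a deliberate choice here: it pushes the accountability question to the moment a system is logged rather than to the audit.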

Standardise your documentation by using tools like Model Cards (which outline architecture and performance) and Data Cards (which track dataset provenance and bias) [8][3]. Treat these documents like code - use version control systems such as Git to track changes, ensuring you’ve got an audit trail for every update and approval [1][8].

To stay ahead of any issues, set automated drift detection thresholds. For example, a ±5% deviation from baseline performance could trigger a re-validation process [1]. Organisations with strong data governance practices report 34% faster AI deployment and 47% fewer model retrainings [5][6]. Take the example of a global data provider in November 2025: by using Acceldata's platform, they cut their data quality processing time from 22 days to just 7 hours, all while scaling compliance checks across 500 billion rows of data [9].
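The ±5% trigger can be sketched in a few lines. One assumption to flag loudly: the threshold is treated here as a relative deviation from the baseline metric (not an absolute shift in percentage points), since the text doesn't specify which. The function names are hypothetical.

```python
def relative_deviation(baseline, current):
    """Signed change of `current` relative to `baseline` (assumes a relative threshold)."""
    if baseline == 0:
        raise ValueError("baseline must be non-zero")
    return (current - baseline) / baseline


def needs_revalidation(baseline, current, threshold=0.05):
    """True when the metric has moved more than `threshold` in either direction."""
    return abs(relative_deviation(baseline, current)) > threshold


# Baseline accuracy 0.90: a drop to 0.84 is roughly -6.7%, a drop to 0.88 roughly -2.2%.
print(needs_revalidation(0.90, 0.84))
print(needs_revalidation(0.90, 0.88))
```

Whichever interpretation you pick, write it into the monitoring documentation, so an auditor can see exactly what "5%" means for each system.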

Lastly, document human-in-the-loop protocols. These should clearly outline which decisions need manual review or approval [7][8]. This isn’t just a good habit - it’s becoming a legal requirement for high-risk systems under frameworks like the EU AI Act. Skipping this step could land you in hot water down the line.

What to Include in AI Compliance Documentation

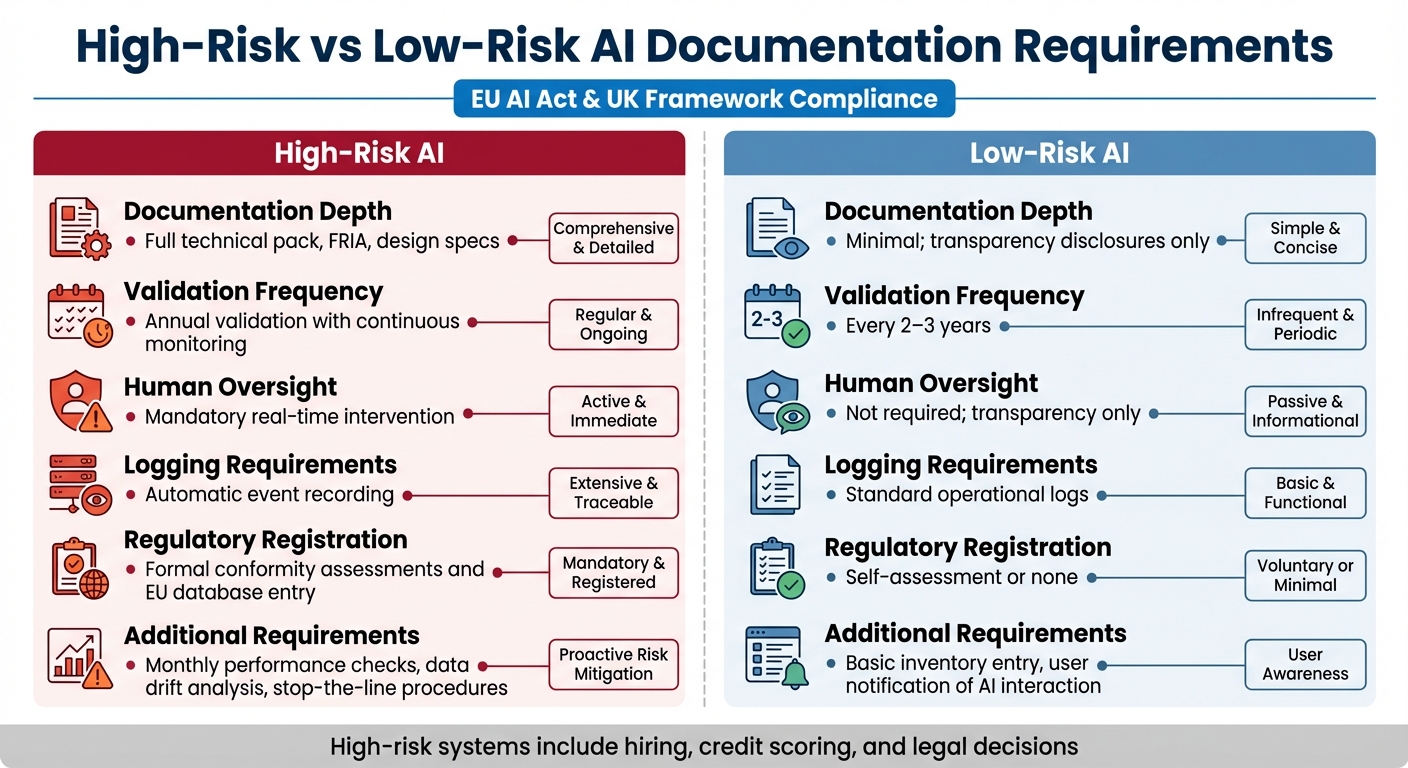

High-Risk vs Low-Risk AI Documentation Requirements Comparison

Once you’ve set up governance, it’s time to get stuck into your compliance documentation. Regulators aren’t just looking for policies on paper anymore - they want proof that your AI systems operate responsibly. This means things like operational logs, review records, and validation reports are now must-haves. Interestingly, only 38% of mid-market firms have formal AI risk registers [10], which suggests many organisations are struggling to fully demonstrate compliance.

Required Documentation Elements

Your compliance documentation needs to cover seven key areas. These will help auditors or regulators piece together the entire lifecycle of your AI systems.

- Central AI System Inventory: Start with a clear register of all your AI systems. This should include details like unique IDs, ownership, risk classification, and data inputs [1][4]. This is also a great way to uncover "shadow AI" - systems flying under the radar. Organisations often find two to three times more models than they expected during their first audit [1][4].

- Risk Assessments: For high-risk systems, you’ll need to document assessments like a Data Protection Impact Assessment (DPIA) under GDPR and a Fundamental Rights Impact Assessment (FRIA) as required by the EU AI Act [4][10]. Violations of GDPR’s Article 22 (on automated decision-making) account for 34% of enforcement actions involving AI [10]. And let’s not forget, the most serious breaches of the EU AI Act can cost you up to €35 million or 7% of global turnover.

- Technical Documentation: This should detail your system’s architecture, the origin of your training data, design specs, and performance metrics like accuracy and recall [1][4]. Don’t just take your vendor’s word for it - ask for things like model cards, security certifications (e.g., ISO 27001 or SOC 2), and incident notification SLAs [4].

- Traceability and Logging: Automate logs to capture timestamps, actor IDs, model versions, and policy outcomes [3][4]. For high-risk systems, keep these logs for the system’s entire operational life plus any standard retention periods [4].

- Human Oversight Protocols: Clearly document when and how humans can intervene. Define roles, escalation paths, and provide evidence of operator training. As Matt Chappell from Cognisys puts it:

"A process where a human technically has the ability to override a system but never does... does not satisfy Article 14 [of the EU AI Act]." [4]

- Bias and Fairness Testing: Record the results of your bias tests, especially those measuring the impact on protected groups. Also, show the steps you’ve taken to address any biases you’ve found [1]. This is becoming a legal requirement in many places.

- Change Management Records: Track every modification with version control logs, pre-deployment approvals, and assessments of how changes might impact the system [1]. Treat your documentation like code - every change should leave a trace.
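For the Traceability and Logging item in the list above, a structured log line might look something like this. It's a sketch using only the Python standard library; the field names follow the list (timestamp, actor ID, model version, policy outcome), while `system_id` and the example values are assumptions added for illustration.

```python
import json
from datetime import datetime, timezone

REQUIRED_FIELDS = ("timestamp", "actor_id", "model_version", "policy_outcome")


def make_log_entry(system_id, actor_id, model_version, policy_outcome, now=None):
    """Serialise one audit event as a JSON line with a UTC timestamp."""
    now = now or datetime.now(timezone.utc)
    entry = {
        "timestamp": now.isoformat(),
        "system_id": system_id,          # assumption: ties the event to the inventory
        "actor_id": actor_id,
        "model_version": model_version,
        "policy_outcome": policy_outcome,
    }
    return json.dumps(entry, sort_keys=True)


def missing_fields(line):
    """Return the required fields that are absent or empty in a logged JSON line."""
    entry = json.loads(line)
    return [name for name in REQUIRED_FIELDS if not entry.get(name)]


line = make_log_entry(
    system_id="AI-001",
    actor_id="user-482",
    model_version="2.3.1",
    policy_outcome="escalated_to_human",
    now=datetime(2026, 1, 15, 9, 30, tzinfo=timezone.utc),
)
print(line)
print(missing_fields(line))
```

Writing one JSON object per line keeps the log easy to append to and easy to query later, which matters when retention runs for the system's whole operational life.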

These elements are your starting point, but the specifics will depend on the risk level of your AI systems. Organisations that get ahead with solid governance often find they spend three to five times less on remediation compared to those that reactively scramble to fix issues [10].

High-Risk vs Low-Risk AI Documentation Requirements

Not every AI system needs the same level of documentation. Both the EU AI Act and the UK’s emerging frameworks take a risk-based approach.

High-risk systems - like those used in hiring, credit scoring, or legal decisions - require detailed documentation. For these, you’ll need a full technical documentation pack (its contents are set out in Annex IV of the EU AI Act), a FRIA, detailed design specs, and real-time human intervention protocols [1][4][10]. These systems also need annual validation, constant monitoring, and monthly performance checks, including data drift analysis. Automatic event logging is non-negotiable, and these systems must also be registered in the EU database [4][10].

Low-risk or limited-risk systems, on the other hand, focus mainly on transparency. For instance, if you’re using AI in a chatbot, you just need to tell users they’re interacting with AI and keep a basic inventory entry. Reviews for these systems typically happen every two to three years, and standard operational logs will suffice instead of heavy-duty oversight [10].

Here’s a quick comparison:

| Feature | High-Risk AI | Low-Risk AI |
|---|---|---|
| Documentation Depth | Full technical pack, FRIA, design specs [1][4] | Minimal; transparency disclosures only [10] |
| Validation Frequency | Annual validation with continuous monitoring [1] | Every 2–3 years [1] |
| Human Oversight | Mandatory real-time intervention [4][10] | Not required; transparency only [10] |
| Logging Requirements | Automatic event recording [4] | Standard operational logs [10] |
| Regulatory Registration | Formal conformity assessments and EU database entry [4][10] | Self-assessment or none [10] |

To make sure nothing slips through the cracks, run a "shadow AI" audit across your organisation, covering procurement, IT, and business units. Assign clear accountability - don’t just say "the team" is responsible. Name specific individuals for each risk and mitigation control [10]. For high-risk systems, you’ll also want a "stop-the-line" procedure, allowing a human to pause or override the system if needed [4].

The key takeaway? Tailor your documentation to the risk level of your AI systems, but make sure every system is properly recorded and categorised [1][4].

How to Create and Organise Documentation

Once you've identified what needs documenting, the next step is ensuring it’s practical and easy to use. A well-organised compliance folder can make audits far less stressful. The trick? Standardise your approach to documentation and automate the repetitive bits, freeing up your team to focus on critical decisions.

Standardising Documentation Practices

Start by establishing a single source of truth for all your AI systems. This means creating a central inventory where every model has a unique ID, a specific owner (not just "the team"), a risk classification, details of data inputs, and set validation dates [1][4]. By standardising these fields across projects, you’ll have a clear overview of what exists, who’s accountable, and when updates are due. This setup isn’t just tidy - it ensures you don’t miss anything crucial.

Next, match the level of documentation to the system’s risk. High-risk systems will need detailed development records, validation reports, and design specs. On the other hand, low-risk systems might only require a scope memo [1]. Beyond the technical jargon, always document the reasoning behind your choices. For instance, why did you choose a "black box" model over a more interpretable one? Regulators care about your logic just as much as your architecture [2].

To avoid duplicating effort, adopt a "link, don’t copy" method. Instead of recreating existing artefacts, reference documentation from frameworks like ISO 27001, SOC 2, or GDPR DPIAs. This approach keeps your evidence interconnected and avoids unnecessary repetition. Also, treat your documentation like code - use version control to track every iteration. Tools like Git can help ensure every model update is traceable [1]. As Rebecca Leung from RiskTemplates puts it:

"The gap between 'we have a policy' and 'we can prove compliance' is where enforcement actions live." [1]

Once your documentation process is consistent, automation can take it to the next level.

Using Automation for Documentation

Automation builds on standardised practices by keeping your records up-to-date and audit-ready. While it can handle routine tasks, human review is still essential to ensure everything checks out. Tools like AgentID can automatically log AI operations, test results, and access records, creating a real-time audit trail with minimal manual input [3][11]. You can also embed compliance checks into your CI/CD pipeline, flagging non-compliant code before it goes live [11]. This "compliance rules as code" approach turns your policies into automated checks, helping you avoid nasty surprises during audits.
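As a rough illustration of "compliance rules as code", the check below validates a model's documentation record before deployment. Everything here is an assumption for the sake of the sketch: the field names, the rule that high-risk systems must reference a FRIA and an oversight protocol, and the helper names. It isn't tied to AgentID or any specific CI product.

```python
BASE_FIELDS = ("system_id", "owner", "risk_tier", "data_inputs", "last_validated")
HIGH_RISK_FIELDS = ("fria_reference", "human_oversight_protocol")


def check_card(card):
    """Return a list of violations for one documentation record (a plain dict)."""
    required = BASE_FIELDS
    if card.get("risk_tier") == "high":
        required = BASE_FIELDS + HIGH_RISK_FIELDS
    return [f"missing field: {name}" for name in required if not card.get(name)]


def exit_code(cards):
    """0 if every record passes, 1 otherwise - suitable as a CI job's exit status."""
    return 1 if any(check_card(card) for card in cards) else 0


chatbot = {
    "system_id": "AI-002",
    "owner": "Support Lead",
    "risk_tier": "low",
    "data_inputs": ["chat transcripts"],
    "last_validated": "2025-06-01",
}
cv_screener = {
    "system_id": "AI-001",
    "owner": "Head of Talent",
    "risk_tier": "high",
    "data_inputs": ["applicant CVs"],
    "last_validated": "2025-06-01",
    # No FRIA reference or oversight protocol yet, so this record should fail.
}

print(check_card(chatbot))
print(check_card(cv_screener))
print(exit_code([chatbot, cv_screener]))
```

In a pipeline, a small wrapper script would load the records from the repository and pass `exit_code(...)` to `sys.exit`, so a missing artefact blocks the release instead of surfacing at audit time.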

For ongoing monitoring, automated drift detection can alert you when performance veers off course (e.g., a ±5% deviation from baseline), prompting a re-validation [1]. Intelligent document processing tools can also help by organising and extracting information from existing compliance records, maintaining version control, and building reliable audit trails [12]. With the AI compliance automation market valued at £4.8 billion and projected to hit £14.4 billion by 2033, these tools are clearly gaining traction [11].

But let’s be clear - automation isn’t a magic wand. Human oversight remains critical. Treat AI-generated compliance outputs as a starting point, not the final say [13]. All automated results should be reviewed by a qualified professional. Running tabletop exercises with scenarios like "bias complaints" or "incorrect outputs" can test whether your oversight processes are up to scratch [4]. Ultimately, documentation isn’t just about having policies - it’s about proving you follow them.

Monitoring and Maintaining Documentation

Once you've standardised and automated your documentation, keeping it accurate requires regular reviews and consistent monitoring. AI systems evolve over time - models can drift, regulations change, and what was compliant yesterday might not hold up tomorrow. Staying on top of these shifts through ongoing checks is what keeps compliance grounded in reality, rather than just looking good on paper.

Setting Review Schedules

How often you review should depend on the level of risk involved. For high-risk systems, aim for annual full validations alongside continuous monitoring. Medium-risk systems might need reviews every 18 months, while low-risk systems can stretch to every 2–3 years [1]. But it’s not just about the calendar - events like new data sources, expanded user bases, performance changes beyond ±5%, or updates in regulations should trigger immediate updates to your documentation [1].
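The schedule above is simple enough to encode directly. In this sketch the intervals come from the paragraph (12 months for high risk, 18 for medium), with 24 months chosen for low risk as the conservative end of the 2–3 year range; the event names and function names are hypothetical labels for the triggers listed.

```python
import calendar
from datetime import date

# Months between full reviews per risk tier; "low" uses the shorter end of 2-3 years.
REVIEW_INTERVAL_MONTHS = {"high": 12, "medium": 18, "low": 24}

# Hypothetical labels for the event-based triggers described in the text.
TRIGGER_EVENTS = {
    "new_data_source",
    "expanded_user_base",
    "performance_shift",
    "regulation_update",
}


def add_months(start, months):
    """Add calendar months, clamping the day to the end of the target month."""
    month_index = start.month - 1 + months
    year = start.year + month_index // 12
    month = month_index % 12 + 1
    day = min(start.day, calendar.monthrange(year, month)[1])
    return date(year, month, day)


def next_review(last_review, risk_tier):
    """Date of the next scheduled review for a system in the given tier."""
    return add_months(last_review, REVIEW_INTERVAL_MONTHS[risk_tier])


def review_due(last_review, risk_tier, today, events=()):
    """True if a trigger event occurred or the scheduled date has passed."""
    if TRIGGER_EVENTS.intersection(events):
        return True
    return today >= next_review(last_review, risk_tier)


print(next_review(date(2025, 1, 15), "high"))
print(next_review(date(2025, 3, 31), "medium"))
print(review_due(date(2025, 1, 15), "low", date(2025, 6, 1), ["new_data_source"]))
```

Storing the computed date alongside each inventory entry gives you the "review date linked to a specific system ID" that auditors look for.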

Matt Chappell from Cognisys puts it perfectly:

"Ready is not a feeling. It is a set of artefacts that exist, are approved, are versioned and are linked to a specific system ID with a review date" [4].

With the EU AI Act's high-risk system requirements coming into play by August 2026, there's no time to drag your feet [14]. Once you've nailed down your review schedules, continuous monitoring bridges the gap to ensure nothing slips through the cracks between formal reviews.

Continuous Monitoring and Change Management

In between scheduled reviews, keep an eye on performance, data drift, and bias - monthly or quarterly, depending on the system's risk level [1]. If drift detection flags a performance shift, such as exceeding ±5% from your baseline, that’s your cue to update documentation and possibly re-validate the model [1]. Importantly, log everything - whether it's a flagged issue or a clean report. Regulators want to see you're actively monitoring, not just scrambling when things go wrong [1].

These efforts tie back to the governance strategies discussed earlier. Incorporate AI incident reports and change logs into your existing security workflows. Document every issue, the actions taken, and the reasoning behind any changes [1][4]. And don’t forget to set up a "stop-the-line" procedure, so you can immediately pause or override high-risk systems if violations are detected [4]. This creates a living audit trail, showing you're not just ticking boxes but actively managing compliance.

Demonstrating Compliance Through Documentation

Keeping your documentation in order is more than just good housekeeping - it’s your proof to regulators, customers, and investors that your AI systems are doing exactly what they’re supposed to. As Rebecca Leung, Founder of RiskTemplates, aptly says:

"Having an AI policy isn't compliance - having proof you follow it is." [1]

Think of governance as the roadmap for how you operate, and compliance as the evidence that you’re sticking to the route.

Preparing for Audits

When regulators come calling, they’re not just looking for policies; they want proof - actual evidence that your policies are being followed day-to-day [1]. To stay ahead, start with a detailed AI model inventory. This should include system names, versions, providers, purposes, data inputs, and risk classifications [4]. Don’t be surprised if you uncover far more models than you expected - organisations often find two to three times more models during initial reviews, thanks to undocumented pilot tools and AI features embedded in other software [1][4].

Your audit file needs to be thorough, covering seven key layers:

- Model inventory

- Risk classification

- Development and design records

- Validation and testing results

- Approval and change management logs

- Ongoing monitoring data

- Board-level oversight [1]

The golden rule? Make it reconstructible. A third party should be able to follow the chain from your policies to how they’re implemented, how the system behaves in real-time, and how exceptions are handled [3]. For high-risk systems, frameworks like SR 11-7 require independent validation by someone outside the development team [1].
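A quick way to sanity-check an audit file against the seven layers is a completeness check like the one below. It's only a sketch: the layer keys are snake_case versions of the list above, and the evidence file names are made up for illustration.

```python
AUDIT_LAYERS = (
    "model_inventory",
    "risk_classification",
    "development_and_design_records",
    "validation_and_testing_results",
    "approval_and_change_logs",
    "ongoing_monitoring_data",
    "board_level_oversight",
)


def missing_layers(evidence):
    """Return the layers with no evidence artefacts attached, in framework order."""
    return [layer for layer in AUDIT_LAYERS if not evidence.get(layer)]


# Hypothetical audit file for one system: each layer maps to its artefacts.
audit_file = {
    "model_inventory": ["inventory/AI-001.json"],
    "risk_classification": ["risk/AI-001-tiering.md"],
    "development_and_design_records": ["design/AI-001-spec.md"],
    "validation_and_testing_results": ["validation/2025-06-report.pdf"],
    "approval_and_change_logs": ["changes/AI-001.log"],
    "ongoing_monitoring_data": ["monitoring/2025-Q3.csv"],
    "board_level_oversight": [],     # nothing filed yet
}

print(missing_layers(audit_file))
```

A check like this only proves that something is filed under each layer, not that the evidence is any good, so it complements rather than replaces a mock audit.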

The stakes are high. In March 2024, JPMorgan Chase had to cough up $250 million in penalties from the OCC due to gaps in their risk management approach [1]. Citibank, on the other hand, spent five years (2020–2025) under OCC consent orders for similar issues [1]. Running mock audits or tabletop exercises with your internal teams can be a lifesaver, helping you ensure bias complaints and system drift are being handled effectively [1][4].

A solid audit file doesn’t just keep regulators happy - it also sets the stage for gaining trust through certifications.

Building Trust with Certifications and Transparency

Certifications like ISO/IEC 42001 for AI management systems, ISO 27001, and SOC 2 Type II for operational controls give you that extra layer of credibility [1]. If you’re working on high-risk AI systems in the EU, you’ll also need a CE marking and a Declaration of Conformity under the EU AI Act [4].

But certifications are only part of the story. Transparency matters too. Tools like Model Cards and Data Cards can help you document the nitty-gritty details - model architecture, training data origins, intended uses, and known limitations [3][8]. Sharing summaries of Fundamental Rights Impact Assessments (FRIA) or Algorithmic Impact Assessments (AIA) shows that you’ve seriously considered the risks to individuals before rolling out your systems [4][8].

The numbers tell a compelling story. Organisations that prioritise governance see a 2.5x higher return on their AI investments [5]. On the flip side, those that don’t often face hefty costs - data breaches alone average $4.45 million [15]. Yet, as of early 2025, only 25% of organisations had fully implemented AI governance programmes, and just 38% of mid-market firms kept formal AI risk registers [1][10]. Non-compliance penalties can be brutal too - up to €35 million or 7% of global annual turnover for the most serious breaches of the EU AI Act. With high-risk system requirements kicking in by August 2026, it’s smart to start preparing now. You’ll likely need at least 5–6 months to pull together all the documentation and conduct dry-run audits [4].

For those who need a bit of extra help, there are experts ready to guide you through the process.

How Metamindz Supports Compliance Documentation

Metamindz's Vibe-Code Fixes steps in with CTO-level oversight for AI-generated code, ensuring it’s both reliable and compliant before it goes live. Every project is led by an active CTO who rolls up their sleeves to conduct architecture reviews and code audits. This hands-on approach provides the independent validation that regulators want [1].

If your organisation is gearing up for technical due diligence or a merger, Metamindz also offers "tech health" reports. These reports include actionable recommendations to address security gaps, bugs, and scalability issues - giving you the validation trail required by frameworks like SR 11-7 [1]. Founded by Lev Perlman, who’s also a Technical Advisor at Google for Startups and Loyal VC, Metamindz works with startups and enterprises across the UK, US, Europe, and the Middle East. Pricing is transparent too - technical due diligence starts at £3,750, while fractional CTO services are available from £2,750 per month.

Conclusion

AI compliance documentation is all about proving you practise what you preach. As Rebecca Leung, Founder of RiskTemplates, aptly says:

"Having an AI policy isn't compliance - having proof you follow it is." [1]

The real challenges arise in bridging the gap between having a policy and demonstrating compliance. And with penalties under the EU AI Act potentially hitting €35 million or 7% of global turnover [16], the consequences of falling short are serious.

The numbers tell the story. Organisations with thorough documentation frameworks have slashed compliance costs by 68% and sped up model deployment from 127 days to just 34 [16]. In legal disputes, those with solid records win 82% of the time, compared to just 31% for those with poor documentation [16]. Yet, a staggering 61% of AI systems submitted for EU conformity assessments fail their first review due to weak documentation [16]. These stats highlight the urgency of getting this right.

With high-risk system requirements kicking in by August 2026, now’s the time to act. Start by building a living inventory of your AI models, categorise risks systematically, and move beyond static policies to create audit-ready evidence - think logs, version histories, and oversight records that can stand up to third-party scrutiny [1][3]. Proper documentation doesn’t just tick regulatory boxes; it lays the groundwork for scalable, trustworthy AI that earns the confidence of regulators, investors, and users alike.

Key Takeaways

- AI Model Inventory: This is your starting point. Keep a dynamic record of every system, complete with details like ownership, purpose, risk classification, and version [1][4].

- Risk Tiering: Use a structured approach to risk. High-risk systems should be validated annually by independent reviewers, while low-risk ones can be checked periodically [1].

- Seven-Layer Framework: Build compliance into your operations using these layers: Model Inventory, Risk Classification, Development/Design Records, Validation/Testing Results, Approval/Change Logs, Ongoing Monitoring Data, and Board-Level Oversight [1]. Make sure each layer generates real, operational evidence - logs, version histories, and human oversight records - not just written policies [3].

- Automation: Leverage MLOps platforms to automate compliance updates. By pulling technical metadata straight from your code and logs, you can ensure documentation stays up-to-date whenever a model is retrained, cutting down on manual work and preventing documentation drift [16].

- Reconstructibility: Your documentation should be airtight. A third party - whether it’s an auditor, regulator, or potential buyer - should be able to trace everything from your policies to real-time system behaviour and how you handle exceptions [3]. That’s the gold standard for compliance.

The clock’s ticking, and the stakes are high. But with the right steps, you can turn compliance into a strength, not just a checkbox exercise.

FAQs

What’s the quickest way to build an AI system inventory?

To move quickly, start with a "shadow AI" sweep across procurement, IT, and business units, covering in-house builds, purchased tools, and AI features embedded in other software. Record each system in one central register with a unique ID, a named business owner, a risk tier, its data inputs, and its last validation date. Expect to find more than you planned for: organisations often discover two to three times more models than expected on the first pass. A structured roadmap - like a week-by-week compliance plan - keeps things on track, and pairing it with expert advice can help make sure nothing important is overlooked.

How do I decide if my AI system is “high-risk” under the EU AI Act?

To figure out whether your AI system falls into the "high-risk" category under the EU AI Act, you’ll need to dig into its purpose, potential impact, and the context in which it’s used. The key idea here is that high-risk systems are those that could have a major effect on safety, fundamental rights, or legal compliance.

Start by checking Annex III of the Act, which lists the use cases treated as high-risk, such as hiring and credit scoring. Pay close attention to sectors like healthcare, where the stakes are naturally higher. Once you've identified potential risks, document the steps you’re taking to reduce them. This might include things like safety protocols or ethical safeguards.

If your system is classified as high-risk, you’ll need to meet some pretty tough requirements. These include keeping detailed documentation, ensuring there’s human oversight, and setting up a system for continuous monitoring. It’s not just about ticking boxes - it’s about making sure your AI operates responsibly and transparently.

What evidence do auditors expect beyond policies?

Auditors often need more than just written policies to confirm AI compliance. They look for tangible evidence, such as records of system deployment, details of controls that have been implemented, risk assessments conducted, and monitoring activities in place. Additionally, they’ll want to see how incidents are handled and review documentation of the system's behaviour over time. These elements show that compliance isn’t just a box-ticking exercise but something actively managed and maintained in practice.