AI Data Governance: Security and Compliance

AI systems are powerful but risky. They expose organisations to new security threats and regulatory challenges. For instance, one study of model inversion attacks reconstructed face images that could be matched to individuals in the training data with 95% accuracy, and legal disputes like the Workday lawsuit in 2024 show how poor governance can lead to discrimination claims. On top of that, new laws like the Data (Use and Access) Act 2025 and the EU Artificial Intelligence Act bring hefty fines - up to £17.5 million under UK rules or €35 million under the EU Act, with turnover-based caps that can go higher.

To stay ahead, you need to:

- Lock down data access: Use Role-Based Access Control (RBAC) and tools like encryption and federated learning.

- Automate governance: Use tools for real-time monitoring, bias detection, and compliance checks instead of relying on manual audits.

- Set clear policies: Define data retention, minimise risks, and ensure human oversight.

- Embed governance in workflows: Use MLOps pipelines, automate audits, and add kill-switches for faulty models.

The takeaway? AI governance isn’t just about avoiding fines - it’s about protecting your data, reputation, and customers. Let’s dive into how you can make it work.

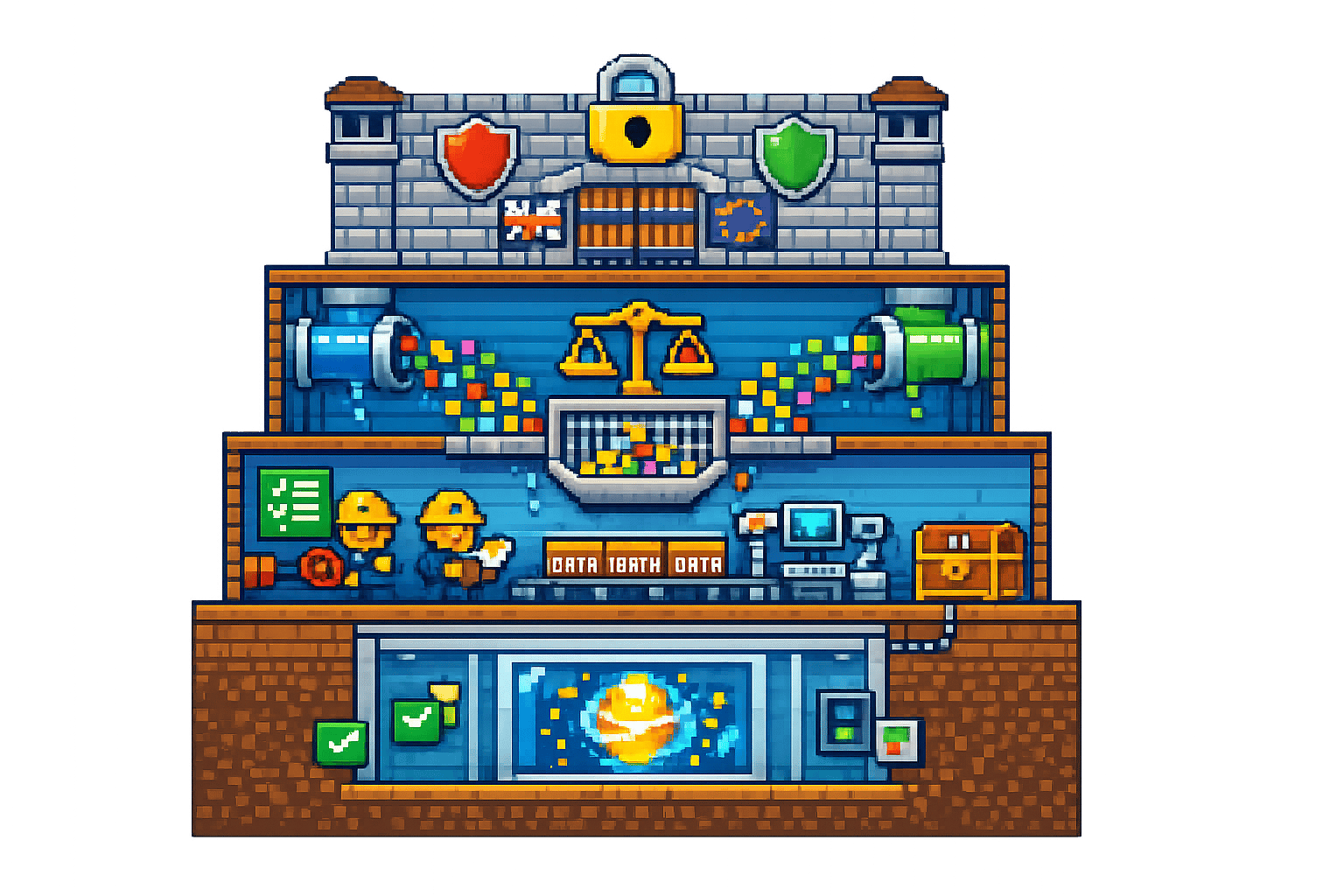

AI Data Governance: Key Statistics, Risks, and Regulatory Fines

Common Challenges in AI Data Governance

Organisations diving into AI face a maze of governance issues that stretch beyond the usual IT security concerns. These challenges often stem from three key areas: technical vulnerabilities that put sensitive data at risk, a regulatory environment that's constantly evolving, and ongoing problems with the quality of datasets that drive AI decisions. Let's unpack where these issues arise and the risks they bring.

Data Security and Privacy Risks

AI systems introduce new vulnerabilities that traditional security tools weren't built to handle. For instance, model inversion attacks have shown how attackers can reconstruct sensitive data from a model's outputs. One study revealed that face images could be reconstructed and matched to individuals in the training data with 95% accuracy [1]. Similarly, attackers have inferred patients' genetic biomarkers from a medical AI model by observing its inputs and outputs - no direct access to the data was needed [1].

"AI systems can exacerbate known security risks and make them more difficult to manage." – Information Commissioner's Office (ICO) [1]

Another challenge is data sprawl during the training process. Large datasets often get copied and stored across different environments, making it easier for unauthorised access to slip through the cracks [1]. Risks like these help explain why 90% of organisations are ramping up their privacy programmes to address AI-specific risks, with 93% planning to allocate more resources to privacy and data governance in the next two years [8].

Meeting Changing Regulatory Requirements

The regulatory landscape isn't just shifting - it’s doing so at breakneck speed. The Data (Use and Access) Act 2025, which received Royal Assent on 19 June 2025 and is being brought into force in stages, updates UK GDPR to tackle AI-specific risks such as automated decision-making. Fines can hit a hefty £17.5 million or 4% of global annual turnover, whichever is higher [6]. Across the Channel, the EU's Artificial Intelligence Act raises the stakes even higher, with penalties reaching €35 million or 7% of worldwide annual turnover for severe breaches [7].
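To see how these caps scale with company size, here is a minimal sketch of the "higher of a fixed amount or a share of turnover" calculation. The function name and the example turnover figures are made up for illustration, and the sketch assumes the higher-of rule applies to both regimes; actual penalties are set case by case and are usually far below the maximum.

```python
def maximum_fine(annual_turnover: float, fixed_cap: float, turnover_percent: float) -> float:
    """Upper limit of a fine: the higher of a fixed cap or a percentage of turnover."""
    return max(fixed_cap, annual_turnover * turnover_percent / 100)


# Hypothetical company with 1 billion euros of worldwide turnover under the EU AI Act caps.
eu_limit = maximum_fine(1_000_000_000, fixed_cap=35_000_000, turnover_percent=7)

# Hypothetical company with 200 million pounds of turnover under the UK GDPR caps.
uk_limit = maximum_fine(200_000_000, fixed_cap=17_500_000, turnover_percent=4)

print(f"EU upper limit: {eu_limit:,.0f}")
print(f"UK upper limit: {uk_limit:,.0f}")
```

For smaller businesses the fixed amount dominates; for large ones the turnover percentage does.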

Navigating compliance is tricky because the legal basis for processing data often changes depending on the AI lifecycle stage. For example, research and development might rely on one lawful basis, while deployment may require another [4]. Organisations also need to carry out Data Protection Impact Assessments (DPIAs) for high-risk activities like large-scale profiling or monitoring [2]. The Data (Use and Access) Act (DUAA) introduces "Recognised Legitimate Interests" for specific cases like crime prevention, but these allowances are tightly defined [6].

The consequences of poor governance are stark. In 2024, Workday Inc. faced a class-action lawsuit after its AI-powered recruitment tool allegedly discriminated against candidates aged 40 and above [7]. This highlights how technical missteps can quickly spiral into legal and reputational disasters.

"AI compliance is not a box-ticking exercise, but a practical safeguard that helps organisations understand what they can confidently say to customers about how AI is used." – Data Driven Legal [7]

Data Quality and Bias Issues

Beyond compliance, keeping data accurate and unbiased is critical to avoid reinforcing harmful patterns. Poor data quality leads to flawed AI outputs and perpetuates existing biases. For example, recruitment tools may unfairly reject female candidates, or image recognition systems might assign derogatory labels to photos of ethnic minorities. In these cases, the AI isn't malfunctioning - it’s simply mirroring the biases in its training data [2].
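One simple way to surface this kind of skew is to compare selection rates between groups. The sketch below is an illustration only: the function names and sample data are hypothetical, and the 0.8 threshold is a commonly cited rule of thumb rather than a legal test under UK or EU law.

```python
from collections import defaultdict


def selection_rates(decisions):
    """Share of positive outcomes per group, from (group, was_selected) pairs."""
    totals = defaultdict(int)
    selected = defaultdict(int)
    for group, was_selected in decisions:
        totals[group] += 1
        selected[group] += int(was_selected)
    return {group: selected[group] / totals[group] for group in totals}


def disparate_impact_ratio(decisions):
    """Lowest group selection rate divided by the highest (1.0 means parity)."""
    rates = selection_rates(decisions)
    highest = max(rates.values())
    return min(rates.values()) / highest if highest else 1.0


# Hypothetical screening outcomes from a recruitment model.
sample = (
    [("female", True)] * 20 + [("female", False)] * 80
    + [("male", True)] * 40 + [("male", False)] * 60
)
ratio = disparate_impact_ratio(sample)
if ratio < 0.8:  # illustrative threshold, to be agreed with legal and HR teams
    print(f"Selection-rate ratio {ratio:.2f} warrants a closer look")
```

A low ratio does not prove discrimination on its own, but it is a cheap signal that the training data or model needs investigating.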

Another headache is statistical inaccuracy. Outdated or incorrect input data can lead to flawed inferences, breaching the UK GDPR's accuracy principle [9]. Over time, even well-trained models can suffer from concept drift, where changes in demographics or behaviours reduce their accuracy [2]. This makes ongoing monitoring essential - yet many organisations lack the resources to do it effectively.

"The deployment of an AI system to process personal data needs to be driven by evidence that there is a problem, and a reasoned argument that AI is a sensible solution to that problem, not by the mere availability of the technology." – Information Commissioner's Office (ICO) [2]

Technical pitfalls like overfitting add to the mix. When a model focuses too much on specific details in its training data, it struggles to generalise to new data. This not only affects performance but also heightens the risk of privacy attacks like model inversion [1]. Then there are adversarial examples - inputs deliberately designed to mislead AI, such as stickers on road signs or distorted face images. These can cause AI systems to misclassify data, creating both security and accuracy challenges [1].

Practical Solutions for AI Data Governance

Tackling AI data governance issues means focusing on security, compliance, and operational efficiency. These strategies directly address the challenges previously discussed, offering practical ways to safeguard data and ensure smooth AI operations.

Setting Up Data Access Controls

The backbone of secure AI governance is Role-Based Access Control (RBAC). This involves carefully defining who can access specific data, when they can do so, and for what purpose. For AI systems making sensitive decisions, organisations need to assign roles, enforce human oversight, and establish clear escalation protocols. This helps prevent unauthorised access to training datasets or model outputs.
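As a rough illustration of the idea, the sketch below maps roles to explicit (resource, action) permissions and denies everything else. The role names, resources, and the `AccessRequest` type are all hypothetical; a real deployment would normally lean on the access controls of its cloud or data platform rather than hand-rolled code.

```python
from dataclasses import dataclass

# Hypothetical role-to-permission map; real roles and resources will differ.
ROLE_PERMISSIONS = {
    "data_scientist": {("training_data", "read"), ("model_outputs", "read")},
    "ml_engineer": {("model_outputs", "read"), ("model_registry", "write")},
    "auditor": {("audit_log", "read")},
}


@dataclass(frozen=True)
class AccessRequest:
    user: str
    role: str
    resource: str
    action: str
    purpose: str  # recorded so reviewers can see why access was requested


def is_allowed(request: AccessRequest) -> bool:
    """Deny by default: allow only if the role explicitly grants (resource, action)."""
    allowed = ROLE_PERMISSIONS.get(request.role, set())
    return (request.resource, request.action) in allowed


request = AccessRequest("ana", "data_scientist", "training_data", "read", "quarterly retraining")
print(is_allowed(request))
```

Capturing the purpose alongside each request is what makes later audits meaningful, even though this sketch does not evaluate it.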

Adding Privacy-Enhancing Technologies (PETs) further strengthens protection. Tools like homomorphic encryption, differential privacy, and federated learning allow AI models to learn from data without exposing sensitive information. For instance, federated learning lets hospitals train a shared diagnostic AI model without exchanging patient records - each hospital keeps its data on-site while contributing to the model.
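To give a feel for how differential privacy works, here is a minimal sketch of the Laplace mechanism applied to a counting query. The function names and the epsilon value are assumptions for illustration; production systems should use a vetted library and track the privacy budget across all queries.

```python
import random


def laplace_noise(scale: float, rng: random.Random) -> float:
    """Sample Laplace(0, scale) as the difference of two exponential samples."""
    return rng.expovariate(1.0 / scale) - rng.expovariate(1.0 / scale)


def private_count(values, predicate, epsilon: float, rng=None) -> float:
    """Noisy count of matching items. A counting query has sensitivity 1,
    so the noise scale is 1 / epsilon (smaller epsilon means more noise)."""
    if epsilon <= 0:
        raise ValueError("epsilon must be positive")
    rng = rng or random.Random()
    true_count = sum(1 for value in values if predicate(value))
    return true_count + laplace_noise(1.0 / epsilon, rng)


# Hypothetical example: how many patients in a dataset are over 65?
ages = [34, 71, 68, 45, 80, 59, 66]
print(private_count(ages, lambda age: age > 65, epsilon=0.5))
```

The published figure stays useful in aggregate while no single record can be confidently inferred from it.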

Another critical step is isolating machine learning environments. These development spaces can pose supply chain risks, so using virtual machines or containers to separate them from regular IT infrastructure is a smart move. Additionally, API rate-limiting can help reduce the risk of privacy attacks such as model inversion, which typically rely on sending a model large numbers of queries.
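Rate limiting is often implemented with a token bucket. The sketch below is one minimal version under stated assumptions: the class name, capacity, and refill rate are illustrative, and in practice this usually lives in an API gateway rather than application code.

```python
import time


class TokenBucket:
    """Allow short bursts up to `capacity`, then a steady `refill_per_second`."""

    def __init__(self, capacity: int, refill_per_second: float, clock=time.monotonic):
        self.capacity = capacity
        self.refill_per_second = refill_per_second
        self.clock = clock  # injectable so the limiter can be tested without sleeping
        self.tokens = float(capacity)
        self.last = clock()

    def allow(self) -> bool:
        """Spend one token if available; otherwise reject the request."""
        now = self.clock()
        elapsed = now - self.last
        self.last = now
        self.tokens = min(self.capacity, self.tokens + elapsed * self.refill_per_second)
        if self.tokens >= 1:
            self.tokens -= 1
            return True
        return False


# Hypothetical limit: bursts of 5 prediction calls, refilling 1 per second per client.
bucket = TokenBucket(capacity=5, refill_per_second=1.0)
print([bucket.allow() for _ in range(7)])
```

Keeping one bucket per API key means a single noisy client cannot probe the model at scale without being throttled.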

"AI systems introduce new kinds of complexity not found in more traditional IT systems... Since AI systems operate as part of a larger chain of software components, data flows, organisational workflows and business processes, you should take a holistic approach to security." – Information Commissioner's Office (ICO) [1]

Using AI to Automate Governance Tasks

AI can also take on some of the heavy lifting when it comes to governance. Automated tools for data tracing and classification can map out where personal data is being used across the AI system, identifying sensitive information and ensuring compliance with regulations like the right to erasure under UK GDPR. This is especially handy for handling data subject access requests (SARs).

Real-time compliance monitoring tools are another lifesaver. These systems continuously check AI activities against internal policies and send alerts when something’s off, catching issues that a periodic manual audit would miss between audit cycles. Expect more of this tooling as 93% of enterprises plan to boost their investment in privacy and data governance over the next couple of years [8].

API anomaly detection is another layer of defence. By tracking API queries for unusual patterns, organisations can spot potential privacy attacks early. Even tools like ServiceNow can help streamline processes like Data Protection Impact Assessments (DPIAs) and AI Impact Assessments (AIAs), improving collaboration and cutting down on delays [7].
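As a simple illustration of the anomaly detection idea, the sketch below flags clients whose query volume sits far above the typical client, using the median and median absolute deviation so one heavy user does not distort the baseline. The log format, function name, and threshold are assumptions; real systems would also look at query content and timing.

```python
from collections import Counter
from statistics import median


def flag_unusual_clients(query_log, threshold: float = 5.0):
    """Return clients whose query count is far above the median client.

    `query_log` is an iterable of (client_id, endpoint) pairs (hypothetical format).
    """
    counts = Counter(client for client, _ in query_log)
    if not counts:
        return []
    values = list(counts.values())
    typical = median(values)
    # Median absolute deviation; fall back to 1.0 when every client looks identical.
    spread = median(abs(value - typical) for value in values) or 1.0
    return sorted(
        client for client, count in counts.items()
        if (count - typical) / spread > threshold
    )


# Hypothetical hour of traffic: three ordinary clients and one hammering the model.
log = (
    [("client-a", "/predict")] * 10 + [("client-b", "/predict")] * 12
    + [("client-c", "/predict")] * 11 + [("client-d", "/predict")] * 500
)
print(flag_unusual_clients(log))
```

A flagged client is a prompt for investigation, not proof of an attack; legitimate batch jobs can look similar.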

Creating Clear Compliance Policies

Technical measures are just one side of the coin - policies are equally important. A strong privacy management framework, backed by senior technical leadership, is key. This framework should outline the lawful basis for processing activities under UK GDPR and include measures to prevent algorithmic bias and discrimination [3][5].

Data minimisation and retention policies are crucial too. Regularly review the relevance of personal data at every stage of the AI lifecycle. Techniques like differential privacy and federated learning can reduce how much personal data is exposed or moved during training. Standardised data deletion processes ("weeding") should also be in place to avoid keeping personal information longer than required [11][1].
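A weeding job can be as simple as a scheduled task that splits records by age. The sketch below assumes a hypothetical record shape with `id` and `collected_at` fields and a single retention period; real policies often vary by data category and must also cover backups and derived datasets.

```python
from datetime import datetime, timedelta, timezone


def weed(records, retention_days: int, now=None):
    """Split records into those still within retention and the ids due for deletion."""
    now = now or datetime.now(timezone.utc)
    cutoff = now - timedelta(days=retention_days)
    kept = [record for record in records if record["collected_at"] >= cutoff]
    removed_ids = [record["id"] for record in records if record["collected_at"] < cutoff]
    return kept, removed_ids


# Hypothetical training records with a one-year retention period.
records = [
    {"id": 1, "collected_at": datetime(2024, 12, 1, tzinfo=timezone.utc)},
    {"id": 2, "collected_at": datetime(2023, 1, 1, tzinfo=timezone.utc)},
]
kept, removed_ids = weed(records, retention_days=365,
                         now=datetime(2025, 1, 1, tzinfo=timezone.utc))
print("Delete:", removed_ids)
```

Returning the removed ids, rather than deleting silently, gives the audit trail something concrete to record.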

Security needs to be baked into these policies from day one. Use RBAC, encrypt data both at rest and in transit, and employ continuous monitoring to detect potential breaches. Automated audit trails that log changes, security updates, and data access events are essential for accountability. This is especially critical given the EU AI Act, where serious violations can lead to fines of up to €35 million or 7% of worldwide annual turnover, whichever is higher [7].

Lastly, don’t overlook third-party risks. A security vulnerability found in the widely used NumPy library in January 2019 is a cautionary tale about the importance of vetting external libraries. Regularly assess the security of open-source tools and subscribe to advisories for updates on vulnerabilities [1].

Building Governance into AI Workflows

AI governance only proves its worth when it's put into action. With 78% of companies now incorporating AI into at least one business function, it's clear that governance can't just be a theoretical exercise - it needs to be embedded into daily workflows [12].

"Real governance now lives in DevOps, procurement, vendor management and runtime controls, not policy binders." – The Compliance Digest [13]

Connecting Isolated Data Sources

One of the biggest headaches for organisations is dealing with scattered data. It's often spread across departments, various cloud platforms, and outdated legacy systems. Centralising all this data might seem like a good idea, but it comes with its own set of risks - especially when it involves sensitive information like customer details or health records.

This is where federated data architectures come in. Instead of moving data to a single location, the AI model itself travels to where the data resides. Take a hospital, for example: it can train a diagnostic AI model on patient records without those records ever leaving its secure environment. This approach not only keeps data private but also allows collaboration across multiple sites.
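The core loop behind this pattern is often federated averaging. Here is a deliberately tiny sketch, assuming a one-parameter model (y is roughly weight times x) and hypothetical site data; real systems add secure aggregation, encryption in transit, and far richer models.

```python
def local_update(weight: float, data, learning_rate: float = 0.01, epochs: int = 20):
    """Gradient descent on one site's own data; raw records never leave the site."""
    for _ in range(epochs):
        gradient = sum(2 * (weight * x - y) * x for x, y in data) / len(data)
        weight -= learning_rate * gradient
    return weight, len(data)


def federated_average(updates) -> float:
    """Combine site weights, weighting each by how many records it trained on."""
    total = sum(count for _, count in updates)
    return sum(weight * count for weight, count in updates) / total


def train(sites, rounds: int = 30) -> float:
    weight = 0.0
    for _ in range(rounds):
        # Only the updated weight and a record count travel back to the coordinator.
        updates = [local_update(weight, data) for data in sites]
        weight = federated_average(updates)
    return weight


# Hypothetical data held separately by two hospitals (both follow y = 2x).
hospital_a = [(1.0, 2.0), (2.0, 4.0)]
hospital_b = [(3.0, 6.0), (1.0, 2.0), (2.0, 4.0)]
print(round(train([hospital_a, hospital_b]), 4))
```

Note that model updates can still leak information about the underlying data, which is why federated learning is usually paired with other safeguards.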

To make this work, it's essential to track every data transaction. Technical teams need to document where personal data is stored, how it moves between systems, and who has access to it at every stage. This creates an audit trail that satisfies UK GDPR requirements and helps identify vulnerabilities before they turn into full-blown breaches [1].

Another tool in the governance arsenal is policy-as-code, which bakes governance directly into deployment pipelines. For instance, a rule like "data from EU citizens cannot leave the EU region" can be enforced automatically every time new code is deployed. This ensures that regional data restrictions are consistently applied across all systems and environments [12].
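A residency rule like that one could be checked in a deployment pipeline along these lines. This is a sketch only: the manifest shape, the policy table, and the region names are hypothetical, and many teams would express the same rule in a dedicated policy engine instead of Python.

```python
# Hypothetical policy table: data origin -> regions where that data may be stored.
RESIDENCY_POLICY = {"eu": {"eu-west-1", "eu-central-1"}}


def residency_violations(deployment: dict) -> list[str]:
    """List every data store that holds restricted data outside its allowed regions."""
    violations = []
    for store in deployment.get("data_stores", []):
        allowed = RESIDENCY_POLICY.get(store["data_origin"])
        if allowed is not None and store["region"] not in allowed:
            violations.append(
                f"{store['name']}: {store['data_origin']} data in {store['region']}"
            )
    return violations


def enforce(deployment: dict) -> None:
    """Fail the pipeline step (non-zero exit) if any rule is broken."""
    violations = residency_violations(deployment)
    if violations:
        raise SystemExit("Deployment blocked: " + "; ".join(violations))


# Hypothetical deployment manifest submitted by a CI job.
manifest = {
    "data_stores": [
        {"name": "features", "data_origin": "eu", "region": "eu-west-1"},
        {"name": "backups", "data_origin": "eu", "region": "us-east-1"},
    ]
}
print(residency_violations(manifest))
```

Because the check runs on every deployment, a misconfigured backup bucket is caught before any data moves rather than in a later audit.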

Once secure data connections are established, the next step is to integrate governance checkpoints into your MLOps pipeline.

Adding Governance Checkpoints to MLOps

MLOps pipelines - used to build, test, and deploy AI models - are the frontline for governance. Waiting until a model is live to check for issues like bias or compliance gaps is far too late.

To address this, automated data lineage tools can map the entire journey from raw data to the AI's final output. If a model produces a biased or incorrect result, these tools allow you to trace the issue back to its source in minutes rather than days. This level of transparency not only meets regulatory requirements but also strengthens overall compliance and security.

Dual-key governance is another layer of protection. This method requires both a commercial owner and a designated risk executive to sign off before a model reaches production. By combining technical and regulatory oversight, this approach ensures that models aren't rushed to market without proper checks [13].
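In a pipeline, the dual-key rule can be modelled as a release gate that stays closed until both roles have signed, by different people. The class and role names below are assumptions for illustration, not a description of any particular tool.

```python
from dataclasses import dataclass, field

REQUIRED_ROLES = {"commercial_owner", "risk_executive"}


@dataclass
class ReleaseRequest:
    model_id: str
    approvals: dict = field(default_factory=dict)  # role -> approver name

    def approve(self, role: str, approver: str) -> None:
        if role not in REQUIRED_ROLES:
            raise ValueError(f"Unknown approval role: {role}")
        self.approvals[role] = approver

    def can_deploy(self) -> bool:
        """Both roles must have signed, and not by the same person."""
        has_both_keys = REQUIRED_ROLES.issubset(self.approvals)
        distinct_people = len(set(self.approvals.values())) == len(self.approvals)
        return has_both_keys and distinct_people


# Hypothetical release of a credit-scoring model.
release = ReleaseRequest("credit-scoring-v3")
release.approve("commercial_owner", "priya")
print(release.can_deploy())  # still waiting on the risk executive
release.approve("risk_executive", "tom")
print(release.can_deploy())
```

Requiring distinct approvers stops one person from holding both keys, which would defeat the point of the control.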

For continuous oversight, integrate automated bias detection and compliance checks into your MLOps pipeline. These tools keep an eye out for "concept drift", which occurs when a model's performance declines as real-world data changes. Regular Data Protection Impact Assessments (DPIAs) should complement this technical monitoring to catch issues early [2].
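One widely used drift signal is the Population Stability Index (PSI), which compares the distribution of a feature or score at training time with what the model sees in production. The sketch below is a minimal version; the bin count and the 0.25 alert level are conventions rather than fixed rules, and the sample data is made up.

```python
import math


def population_stability_index(expected, actual, bins: int = 10) -> float:
    """PSI between a baseline sample and a recent sample of the same variable."""
    low, high = min(expected), max(expected)
    width = (high - low) / bins or 1.0  # guard against a constant baseline

    def proportions(values):
        counts = [0] * bins
        for value in values:
            index = int((value - low) / width)
            counts[min(max(index, 0), bins - 1)] += 1  # clamp out-of-range values
        # A small floor avoids taking the log of zero for empty bins.
        return [max(count / len(values), 1e-6) for count in counts]

    baseline, recent = proportions(expected), proportions(actual)
    return sum((r - b) * math.log(r / b) for b, r in zip(baseline, recent))


# Hypothetical model scores at training time versus last week.
training_scores = [i / 100 for i in range(100)]
recent_scores = [score + 0.5 for score in training_scores]
psi = population_stability_index(training_scores, recent_scores)
if psi > 0.25:  # rule-of-thumb level often read as a significant shift
    print(f"PSI {psi:.2f}: investigate drift before trusting new predictions")
```

PSI only says that inputs or scores have moved; it is the trigger for a review, not a measure of accuracy loss by itself.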

And don't forget about runtime controls and kill-switches. These allow for the immediate withdrawal of a model if it shows signs of bias, security flaws, or compliance breaches. It's not just about reacting to problems - it's about being prepared to act quickly and decisively when the need arises [13].
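At its simplest, a kill-switch is a wrapper that sits between callers and the model and can be flipped without a redeploy. The sketch below shows the shape of the idea; the class names and the fallback behaviour are assumptions, and a production version would store the flag somewhere shared, such as a feature-flag service.

```python
class ModelWithdrawn(RuntimeError):
    """Raised when a withdrawn model is called and no fallback is configured."""


class GuardedModel:
    def __init__(self, predict_fn, fallback=None):
        self._predict = predict_fn
        self._fallback = fallback  # e.g. route the case to a human reviewer
        self._enabled = True
        self.reason = None

    def disable(self, reason: str) -> None:
        """Flip the kill-switch and keep the reason for the audit trail."""
        self._enabled = False
        self.reason = reason

    def predict(self, features):
        if not self._enabled:
            if self._fallback is None:
                raise ModelWithdrawn(self.reason)
            return self._fallback(features)
        return self._predict(features)


# Hypothetical scoring function with a manual-review fallback.
model = GuardedModel(lambda features: sum(features) > 10,
                     fallback=lambda features: "manual_review")
print(model.predict([4, 8]))
model.disable("bias alert raised by monitoring")
print(model.predict([4, 8]))
```

Defining the fallback in advance matters as much as the switch itself, since it decides what happens to in-flight decisions once the model is pulled.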

The days of manual governance are fading fast. With 74% of organisations finding traditional governance methods inadequate for today's AI workloads, the companies that succeed will be the ones treating governance as a technical discipline rather than just a legal requirement [12].

Metamindz: Supporting AI Data Governance

When it comes to AI governance, having the right technical leadership is non-negotiable. It’s not just about understanding the code - it’s about navigating compliance too. By embedding governance into AI workflows, Metamindz ensures policies translate into real-world security practices. These services directly address the earlier-mentioned concerns around data security, regulations, and quality.

Fractional CTO Services for Governance and Compliance

Metamindz’s fractional CTO service bridges the gap between data science and compliance. As the Information Commissioner’s Office rightly points out, “The data protection implications of AI are heavily dependent on the specific use cases... You cannot delegate these issues to data scientists or engineering teams.” [2] This means organisations need leadership that understands both the technical and regulatory sides of AI.

Here’s a real-world example: In February 2026, a SaaS company offering cloud-based HR and payroll systems implemented a robust AI governance framework. They formed an AI Review Committee (including Legal, Privacy, InfoSec, and HR teams) and an executive AI Board for final approvals. By introducing an automated AI impact assessment template in ServiceNow, they gave multiple teams the ability to monitor compliance in real time. This not only streamlined their processes but also made contract negotiations smoother by providing clearer AI-related disclosures to corporate clients [7].

With the Data (Use and Access) Act 2025 now in force, fractional CTOs play a critical role in helping organisations meet mandatory safeguards for Automated Decision-Making. This includes designing systems that allow individuals to challenge automated decisions and ensuring meaningful human oversight is built in from the start [10].

In addition to these strategic services, Metamindz also offers technical due diligence to tackle security issues head-on.

Technical Due Diligence for AI Security

Metamindz’s technical due diligence digs deep into code quality, infrastructure, team dynamics, processes, and security practices. But it’s not just about ticking boxes - this service provides an evidence-based review, complete with screenshots of the codebase and infrastructure configurations [14].

Why is this important? The risks are very real. Research into model inversion attacks has shown that face images can be reconstructed and matched to individuals in the training data with 95% accuracy [1]. Similarly, attack success rates for bypassing safety guardrails are alarmingly high - 84% for GPT-3.5 and 48% for GPT-4 [15]. These figures highlight just how crucial rigorous due diligence is.

Metamindz doesn’t stop at identifying vulnerabilities. They provide hands-on support, offering regular consultations to help teams implement the necessary changes. If a company’s internal capacity is stretched thin, Metamindz can even deploy their own developers to handle the required security and compliance updates [14].

For founders gearing up for investment or acquisition, this service acts as a pre-emptive health check, addressing security or scalability issues before they’re flagged by investors or acquirers. For investors, it’s a way to get a clear picture of a company’s “tech health,” complete with actionable recommendations to mitigate risks [14].

With regulations like the EU AI Act threatening fines of up to €35 million or 7% of global revenue for non-compliance [15], the cost of Metamindz’s services (£2,750 per month for fractional CTO services and £3,750 for technical due diligence) is a small price to pay to avoid massive penalties or reputational damage.

Conclusion

AI data governance isn’t just about ticking compliance boxes - it’s about building trust. As the Information Commissioner’s Office aptly states, "The deployment of an AI system to process personal data needs to be driven by evidence that there is a problem, and a reasoned argument that AI is a sensible solution to that problem, not by the mere availability of the technology" [2]. This perspective is especially relevant as 90% of organisations are now expanding their privacy programmes specifically to safeguard data in today’s AI-driven world [8].

Of course, challenges continue to evolve. AI brings unique risks, such as exposing sensitive data or facing "concept drift", where a model’s effectiveness declines due to shifting behaviours and demographics [2]. To make matters more complex, some machine learning frameworks can contain up to 887,000 lines of code and depend on 137 external libraries, creating a massive attack surface [1].

But these challenges aren’t insurmountable. Practical governance steps, like conducting Data Protection Impact Assessments early on, ensuring meaningful human oversight, and treating governance as an ongoing process, can significantly reduce risks. With hefty fines tied to new regulations, organisations simply can’t afford to cut corners.

The most successful companies see security and compliance as the bedrock for scaling AI responsibly. With 93% of enterprises planning to increase investment in privacy and data governance over the next two years [8], it’s clear that trust, compliance, and the long-term viability of AI systems depend on getting this right.

FAQs

How do I know if our AI use is 'high risk' under UK and EU rules?

The two regimes use the term differently. Under the EU AI Act, 'high risk' is a defined category covering AI used in areas such as employment, credit approvals, and law enforcement. UK law has no equivalent AI-specific label; instead, UK GDPR requires a Data Protection Impact Assessment (DPIA) wherever processing is likely to result in a high risk to individuals' rights or freedoms, which typically includes automated decisions with a major effect on people. If your system touches any of these areas, treat it as high risk and carry out the assessment. The Information Commissioner's Office (ICO) oversees how AI is used under UK GDPR, as amended by the Data (Use and Access) Act 2025.

What’s the quickest way to reduce model inversion and data leakage risk?

The fastest route to cutting down the risk of model inversion and data leakage is by adopting data minimisation techniques. This means processing only the personal data that’s absolutely necessary, using encryption and anonymisation to protect it, and carrying out regular risk assessments, such as Data Protection Impact Assessments (DPIAs). Rate-limiting and monitoring API queries also helps, since these attacks depend on probing the model repeatedly. These measures go a long way in protecting sensitive information while keeping in line with data protection regulations.

How can we include bias checks and audit trails in our MLOps pipeline?

To build bias checks and audit trails into your MLOps pipeline, you’ll want two things. First, run automated bias checks as a pipeline stage on every candidate model, so a model that fails them never reaches production. Second, set up a logging system that keeps a record of all lifecycle events, decisions, and governance actions, including the results of those checks. Using tamper-evident logs is key here - they allow you to track things like where your data came from, any changes made to your models, and who approved what. This boosts transparency and gives you the evidence to demonstrate compliance.

A practical way to achieve this is by using a framework that combines lightweight emitters (to log events efficiently) with append-only storage (to prevent tampering). This setup ensures you can trace every step of the model’s journey while holding everyone accountable.
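One common way to make an append-only log tamper-evident is a hash chain, where each entry's hash covers the previous entry's hash. The sketch below keeps everything in memory for brevity; the class name and event fields are hypothetical, and a real setup would persist entries to write-once storage.

```python
import hashlib
import json

GENESIS = "0" * 64  # placeholder "previous hash" for the first entry


class HashChainLog:
    def __init__(self):
        self._entries = []

    @staticmethod
    def _digest(previous_hash: str, event: dict) -> str:
        payload = json.dumps(event, sort_keys=True)  # stable serialisation
        return hashlib.sha256((previous_hash + payload).encode("utf-8")).hexdigest()

    def append(self, event: dict) -> str:
        """Add an event whose hash depends on everything logged before it."""
        previous_hash = self._entries[-1]["hash"] if self._entries else GENESIS
        entry_hash = self._digest(previous_hash, event)
        self._entries.append({"event": event, "prev": previous_hash, "hash": entry_hash})
        return entry_hash

    def verify(self) -> bool:
        """Recompute the chain; any edited or removed entry breaks it."""
        previous_hash = GENESIS
        for entry in self._entries:
            if entry["prev"] != previous_hash:
                return False
            if self._digest(previous_hash, entry["event"]) != entry["hash"]:
                return False
            previous_hash = entry["hash"]
        return True


# Hypothetical lifecycle events emitted by a pipeline.
log = HashChainLog()
log.append({"type": "bias_check", "model": "cv-screener-v2", "result": "pass"})
log.append({"type": "approval", "model": "cv-screener-v2", "approver": "risk_executive"})
print(log.verify())
```

Periodically publishing the latest hash to a separate system makes it harder still for anyone to rewrite history unnoticed.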