80% of Enterprise AI Projects Fail: 5 Myths Your Leadership Team Still Believes

Enterprise AI project failure isn't a technology problem. It's an organisational one. RAND Corporation's 2025 analysis puts the failure rate at 80.3% - and that number keeps getting repeated in boardrooms like it's a weather forecast nobody can do anything about. But I've been doing technical due diligence and fractional CTO work with startups and enterprises for years, and what I keep seeing is that most of these failures are completely avoidable. The problem is that leadership teams are making decisions based on myths that sound reasonable but are dead wrong.

Here are the five biggest ones I see over and over again - and what actually works instead.

Myth 1: "Our Pilot Was Successful, So We're Ready to Scale"

This is the most dangerous myth in enterprise AI right now. A successful pilot and a production-ready system have almost nothing in common.

MIT Sloan's 2025 research found that 95% of GenAI pilots fail to scale to production. A March 2026 survey of 650 enterprise technology leaders put it differently: 78% of enterprises have AI pilots running, but only 14% have successfully scaled even one agent to organisation-wide operational use.

Why the gap? Pilots deliberately simplify everything. They use clean, curated data. They run on a single use case with a friendly user group. They bypass the gnarly integration work with legacy systems. They don't need monitoring, governance, or rollback plans because nothing is mission-critical yet.

Then someone says "let's roll this out" and suddenly you need to handle messy production data, integrate with six different systems that were built in 2014, build observability tooling, get compliance sign-off, and train 200 people who didn't volunteer to be early adopters.

What actually works: Treat every pilot with a "production readiness checklist" from day one. Before you celebrate the demo, ask: What happens when the data is dirty? What happens at 100x the volume? Who owns this in production? What's the rollback plan? If you can't answer those questions, your pilot isn't successful - it's just a demo that went well.
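One way to make that checklist concrete is to treat the four questions as a gate the pilot has to pass before anyone says "scale". The sketch below is hypothetical. `PilotReview`, `READINESS_QUESTIONS` and the sample answers are illustrative names, not a standard tool. In a real review the answers would live in whatever tracker your team already uses.

```python
from dataclasses import dataclass, field

# Hypothetical checklist built from the four questions above (illustrative only).
READINESS_QUESTIONS = (
    "What happens when the data is dirty?",
    "What happens at 100x the volume?",
    "Who owns this in production?",
    "What's the rollback plan?",
)


@dataclass
class PilotReview:
    name: str
    # Maps each question to the team's written answer; blank means unanswered.
    answers: dict[str, str] = field(default_factory=dict)

    def open_questions(self) -> list[str]:
        """Return every readiness question that has no non-blank answer."""
        return [q for q in READINESS_QUESTIONS if not self.answers.get(q, "").strip()]

    def verdict(self) -> str:
        """A pilot only counts as successful once every question is answered."""
        return "ready to scale" if not self.open_questions() else "demo that went well"


# Hypothetical pilot with only one question properly answered.
review = PilotReview(
    name="invoice-triage",
    answers={
        "Who owns this in production?": "Jane Doe, Head of Finance Ops",
        "What's the rollback plan?": "",
    },
)
print(review.verdict())  # demo that went well
for question in review.open_questions():
    print("OPEN:", question)
```

The gate is deliberately binary. A pilot with three open questions is not "mostly ready"; it is still a demo.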

At Metamindz, when we do fractional CTO work with companies adopting AI, the first thing we ask is: "What does production look like for this?" Not "does the model work?" - that's the easy part.

Myth 2: "We Just Need a Better Model"

I hear this constantly. The AI project isn't delivering results, so the team's instinct is to swap the model. Try GPT-4o instead of GPT-4. Fine-tune something. Throw more compute at it.

The data says otherwise. Gartner's April 2026 research found that only 28% of AI infrastructure projects fully pay off. Of the infrastructure and operations (I&O) leaders who reported failures, 57% said the projects failed because they expected too much, too fast. Not because the model was wrong.

McKinsey found that 60% of AI projects fail not because of model performance, but because of workflow misalignment and governance gaps. And RAND's analysis showed that approximately 70% of AI deployment failures are structural, not model-related.

So look, the model is almost never the bottleneck. The bottleneck is:

- Data quality. Gartner predicts that through 2026, organisations will abandon 60% of AI projects unsupported by AI-ready data.

- Integration. Infrastructure limitations account for 64% of AI scaling failures, with cost overruns averaging 380% at production scale vs pilot projections.

- Ownership. When data scientists own the experiment but no business leader owns the outcome, the project sits in purgatory forever.

What actually works: Before touching the model, audit the data pipeline, the integration points, and the ownership structure. Nine times out of ten, fixing those three things will deliver more improvement than any model swap.
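A data audit doesn't need a platform to get started. Here is a minimal sketch. It assumes records arrive as Python dictionaries with hashable values, and it uses a 5% missing-value tolerance. Both are assumptions, and the `audit_records` helper is hypothetical. The check reports missing-value and exact-duplicate rates for a sample pulled from the pipeline.

```python
from collections import Counter

# Assumed tolerance: flag any field missing in more than 5% of records.
MAX_MISSING_RATE = 0.05


def audit_records(records: list[dict], required_fields: list[str]) -> dict:
    """Summarise basic data-quality signals for a sample of pipeline records."""
    total = len(records)
    if total == 0:
        raise ValueError("no records to audit")

    # Share of records where each required field is absent, None, or an empty string.
    missing_rate = {}
    for name in required_fields:
        missing = sum(1 for r in records if r.get(name) in (None, ""))
        missing_rate[name] = missing / total

    # Exact duplicates: identical values across all required fields (values must be hashable).
    keys = Counter(tuple(r.get(name) for name in required_fields) for r in records)
    duplicates = sum(count - 1 for count in keys.values())

    return {
        "records": total,
        "missing_rate": missing_rate,
        "duplicate_rate": duplicates / total,
        "failing_fields": [n for n, rate in missing_rate.items() if rate > MAX_MISSING_RATE],
    }


# Hypothetical sample with one duplicate, one missing amount and one blank currency.
sample = [
    {"customer_id": "C1", "amount": 120.0, "currency": "GBP"},
    {"customer_id": "C1", "amount": 120.0, "currency": "GBP"},
    {"customer_id": "C2", "amount": None, "currency": "GBP"},
    {"customer_id": "C3", "amount": 75.5, "currency": ""},
]
report = audit_records(sample, ["customer_id", "amount", "currency"])
print(report["failing_fields"])  # ['amount', 'currency']
print(report["duplicate_rate"])  # 0.25
```

Numbers like these are crude, but they are usually enough to show whether the bottleneck is the model or the data feeding it.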

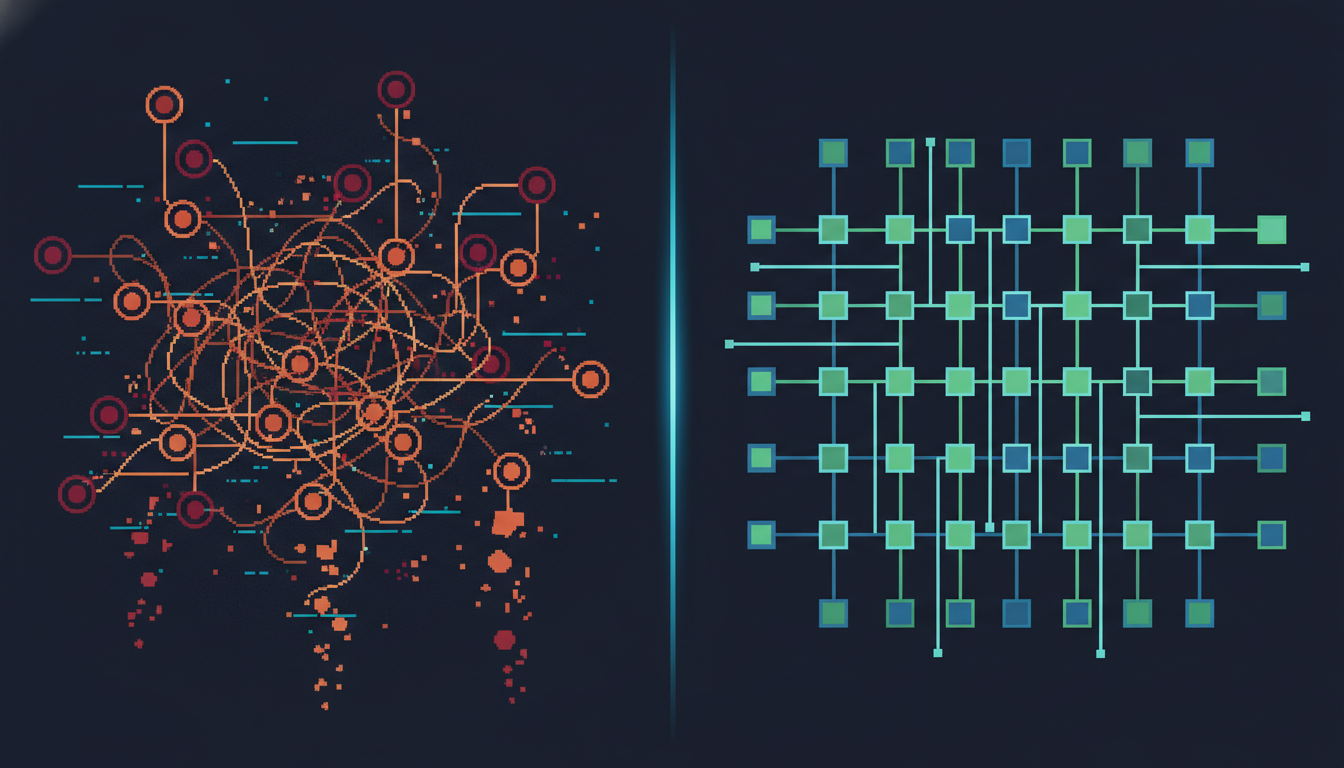

Myth 3: "More Use Cases = More Value"

This one kills me. A leadership team sees AI potential everywhere (fair enough - there IS potential everywhere), and decides the strategy is to launch 15 pilots across 8 departments simultaneously. Spray and pray.

The data from successful deployments shows the opposite pattern. Organisations that actually scaled AI to production started with agents scoped to a single, well-defined task with measurable outputs. Scope expansion happened only AFTER the narrow version proved stable for 90+ days.

Stanford's Enterprise AI Playbook, based on 51 successful deployments, found that sector-specific, narrow agents delivered 500% ROI on average compared to horizontal AI deployments. Five hundred percent. That's not a marginal difference.

| Dimension | Typical Enterprise AI Strategy | CTO-Led Approach (What Actually Works) |
|---|---|---|
| Scope | 15 pilots across 8 departments | 1-2 high-impact use cases, deeply executed |
| Timeline to value | 12-18 months, most stall | 90 days to production, then expand |
| Ownership | Innovation team or data science team | Business owner + technical lead paired |
| Success metric | "We have 15 AI pilots running" | "This one agent saves £200K/year in production" |
| Data readiness | Assumed, rarely validated | Audited before pilot begins |
| Integration plan | Figured out later | Designed from day one with production constraints |
| Monitoring | Manual checks, maybe a dashboard | Automated observability, drift detection, alerting |
| Average ROI | 42% show zero ROI | 500% for domain-specific agents |

What actually works: Pick one use case. The one with the clearest data pipeline, the most measurable outcome, and a business owner who actually cares about the result. Nail that one. Get it to production. THEN expand. This is exactly what we advise in our AI adoption engagements - structured, sequential, with human oversight at every stage.

Myth 4: "AI Projects Fail Because of Technology Limitations"

I've done dozens of technical due diligence reviews where the company blames the AI model or the tooling for a failed project. And almost every time, the real failure was organisational.

KPMG's 2026 research into why enterprise AI stalls after pilot success identified five gaps that account for 89% of scaling failures:

- Integration complexity with legacy systems - not an AI problem, an architecture problem

- Inconsistent output quality at volume - not a model problem, a data and testing problem

- Absence of monitoring tooling - not a technology gap, a planning gap

- Unclear organisational ownership - not a technology issue at all

- Insufficient domain training data - a data problem, not an AI problem

Notice the pattern? Four out of five are organisational or operational issues. The technology works fine. The organisation around it doesn't.

Writer's 2026 enterprise AI adoption report found that 79% of enterprises face challenges despite high AI investment - and only 39% of technology leaders believe their current AI efforts will improve financial performance. That's a confidence crisis, not a technology crisis.

What actually works: Before launching any AI project, answer these questions:

- Who owns this in production? (Name a person, not a team.)

- What existing systems does this need to integrate with?

- What happens when the model is wrong? (And it WILL be wrong sometimes.)

- Who is responsible for monitoring and maintaining this after launch?

- What does success look like in numbers, not vibes?

If you can't answer those clearly, you're not ready to start building. You're ready to start planning. This is exactly the kind of work a fractional CTO does - bridging the gap between what the technology can do and what the organisation is actually ready for.
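The question "what happens when the model is wrong?" usually ends in a human-in-the-loop rule. A common pattern is to auto-approve only high-confidence outputs and send the rest to a person. The sketch below assumes the model returns a confidence score between 0 and 1. `Prediction`, `route` and the 0.85 threshold are all hypothetical, and the threshold should be tuned against labelled examples rather than picked by hand.

```python
from dataclasses import dataclass

# Assumed: the model returns a confidence in [0, 1] with each output.
# 0.85 is an illustrative threshold, not a recommendation.
REVIEW_THRESHOLD = 0.85


@dataclass
class Prediction:
    item_id: str
    label: str
    confidence: float


def route(predictions: list[Prediction], threshold: float = REVIEW_THRESHOLD):
    """Split outputs into an auto-approved list and a human-review list."""
    auto, review = [], []
    for p in predictions:
        (auto if p.confidence >= threshold else review).append(p)
    return auto, review


preds = [
    Prediction("a1", "approve", 0.97),
    Prediction("a2", "reject", 0.62),
    Prediction("a3", "approve", 0.85),
]
auto, review = route(preds)
print([p.item_id for p in auto])    # ['a1', 'a3']
print([p.item_id for p in review])  # ['a2']
print(f"human review rate: {len(review) / len(preds):.0%}")  # human review rate: 33%
```

The human-review rate also gives you one of those success numbers. If it stays high after launch, the model is saving less work than the business case assumed.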

Myth 5: "We Can Figure Out Governance Later"

So, governance is the thing everyone knows they need and nobody wants to do first. It's the broccoli of AI deployment.

But the data is unambiguous. In regulated industries - financial services, healthcare, insurance - over 70% of AI pilots are never operationalised specifically because governance and compliance weren't addressed early enough. By the time the project is ready for production, legal and compliance teams flag issues that require fundamental redesigns.

Gartner has predicted that 40% of agentic AI projects will fail partly because governance frameworks aren't keeping pace with capability expansion. And Deloitte's State of AI in the Enterprise report shows that organisations which scale successfully align governance, finance, data management, and talent structures BEFORE expanding scope - not after.

What actually works: Build governance into the pilot design. Not as a separate workstream. Not as a phase 2 concern. As a core requirement from sprint one. This includes:

- Data governance: Where does the training data come from? Is it compliant? Is it biased?

- Output governance: How do you validate model outputs? What's the human-in-the-loop process?

- Operational governance: Who monitors model drift? What triggers a rollback?

- Compliance governance: Does this meet GDPR, EU AI Act, and ISO/IEC 42001 requirements?
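For operational governance, "what triggers a rollback?" is easier to answer when the trigger is written down as code. One widely used drift measure is the Population Stability Index (PSI). It compares the distribution of a model input or score in production against a baseline by summing (actual - expected) × ln(actual / expected) over each bin.

The sketch below assumes both distributions are already binned into proportions over the same bins. The 0.1 and 0.25 thresholds are common rules of thumb, not a standard. `drift_action` is a hypothetical helper whose result would feed your alerting and rollback process.

```python
import math

# Rule-of-thumb PSI thresholds (industry convention, not a formal standard).
WARN_PSI = 0.1
ROLLBACK_PSI = 0.25


def psi(expected: list[float], actual: list[float], eps: float = 1e-6) -> float:
    """Population Stability Index between two binned distributions.

    Both inputs are per-bin proportions over the same bins (each summing to ~1).
    eps stops an empty bin from causing log(0) or division by zero.
    """
    if len(expected) != len(actual):
        raise ValueError("distributions must use the same bins")
    total = 0.0
    for e, a in zip(expected, actual):
        e, a = max(e, eps), max(a, eps)
        total += (a - e) * math.log(a / e)
    return total


def drift_action(expected: list[float], actual: list[float]) -> str:
    """Map a PSI score to a hypothetical operational response."""
    score = psi(expected, actual)
    if score >= ROLLBACK_PSI:
        return "rollback"
    if score >= WARN_PSI:
        return "alert"
    return "ok"


baseline = [0.25, 0.25, 0.25, 0.25]
print(drift_action(baseline, baseline))                  # ok
print(drift_action(baseline, [0.10, 0.20, 0.30, 0.40]))  # alert
print(drift_action(baseline, [0.05, 0.05, 0.30, 0.60]))  # rollback
```

The point is not the specific statistic. It is that someone agreed on the thresholds, and on who acts on them, before launch.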

At Metamindz, our AI adoption work always starts with governance design alongside technical architecture. Not because we enjoy paperwork (nobody does), but because we've seen too many projects die three months before launch because someone finally asked the compliance team.

The Real Pattern Behind the 20% That Succeed

The organisations that actually get AI into production and keep it there share a few common traits. Stanford's Enterprise AI Playbook studied 51 successful deployments and found three consistent accelerators:

- Executive sponsorship with technical understanding. Not just a VP who approved the budget - someone who understands what "production-ready" means and holds the team to it.

- Existing technical foundations. Good data infrastructure, CI/CD pipelines, monitoring. AI doesn't replace the basics - it amplifies them.

- End-user willingness and involvement. The people who'll actually use the system were involved in designing it. Not consulted after the fact.

High performers redesign workflows around AI - 55% of high performers did this vs only 20% of other companies. They don't bolt AI onto existing processes. They rethink the process with AI capabilities in mind.

And perhaps most importantly: they create dedicated AI operations functions. Not a data science team that also handles production. Not the IT team that "figures it out." A specific function whose job is to move AI from pilot to production and keep it running.

What This Means for Startups and Scaleups

You might be thinking "this is all enterprise stuff, doesn't apply to me." It does - just at a different scale.

If you're a seed-stage startup building with AI, the same myths apply. Your MVP worked in demo? Great - but what happens with real users and messy data? You're using three different AI models across five features? Pick one, nail it, then expand. You haven't thought about what happens when the model hallucinates in production? That's a governance gap, not a feature.

The advantage startups have is speed. You can build governance in from the start because you don't have legacy systems fighting you. You can pick one use case because you don't have eight department heads demanding their own AI project. You can pair a business owner with a technical lead because in a startup, they might be the same person.

If you're raising a Series A and your AI features don't have production monitoring, data quality checks, or a clear ownership model - that WILL come up in technical due diligence. Investors have seen the 80% failure stat too. They want evidence you're in the 20%.

Frequently Asked Questions

Why do most enterprise AI projects fail?

Roughly 80% of enterprise AI projects fail to deliver intended business value, according to RAND Corporation's analysis. The primary causes are organisational, not technological: unclear ownership, poor data quality, integration complexity with legacy systems, and governance gaps. Only about 30% of failures are model-related - the rest are structural and operational issues that exist before AI enters the picture.

What percentage of AI pilots actually reach production?

Only 14% of enterprises have successfully scaled an AI agent to organisation-wide operational use, according to a March 2026 survey of 650 technology leaders. MIT Sloan found that 95% of GenAI pilots specifically fail to scale. The pilot-to-production gap is driven by infrastructure costs running 3-5x initial projections and the deliberate simplifications that make pilots succeed but production deployments fail.

How can companies improve their AI project success rate?

Start narrow with one well-defined use case and measurable outcomes. Build governance and production readiness requirements into the pilot from day one. Assign clear ownership - a named business owner paired with a technical lead. Audit data quality before building anything. And create a dedicated AI operations function rather than expecting existing teams to handle production AI alongside their regular work.

How much money is wasted on failed AI projects?

In 2025, global enterprises invested approximately $684 billion in AI initiatives. Over $547 billion of that (roughly 80%) failed to deliver intended business value, according to aggregated industry research. The cost isn't just the direct investment: failed projects burn team morale, erode executive confidence in AI, and create organisational resistance to future AI adoption attempts.

What role does a fractional CTO play in AI project success?

A fractional CTO bridges the gap between AI capability and organisational readiness. They assess data infrastructure, design production-ready architectures, build governance frameworks, and pair technical decisions with business outcomes. For startups and scaleups that can't justify a full-time CTO, a fractional CTO brings the technical leadership needed to avoid the structural failures that kill 80% of AI projects - without the full-time cost.