The Modular Monolith Renaissance: Why Shopify, Amazon, and Stack Overflow Bet Against Microservices

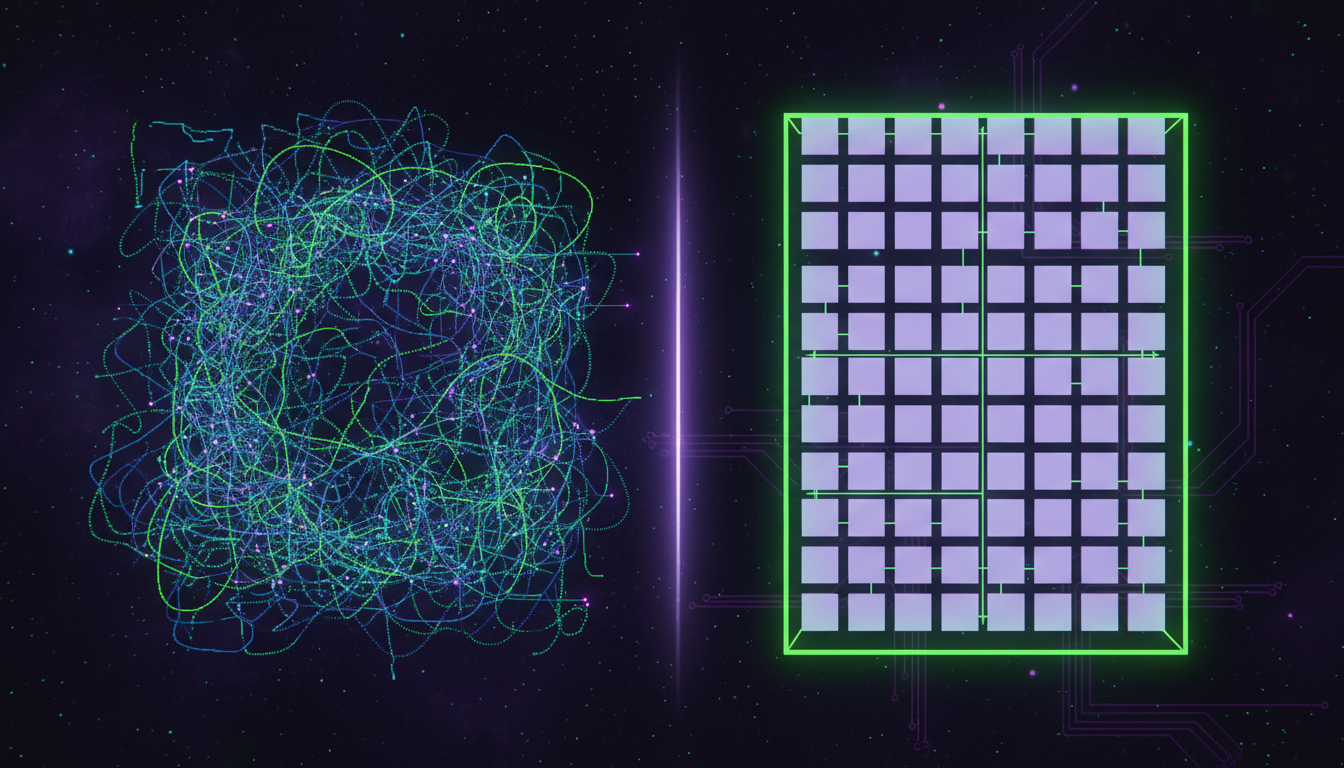

A modular monolith is a single deployable application with explicitly enforced internal boundaries between domains - giving you the simplicity of one deployment unit with the organisational clarity of well-separated modules. In 2026, it's quietly becoming the default architecture choice for teams that have been burnt by premature microservices adoption.

The Microservices Regression Is Real - and the Numbers Prove It

I've spent the last 18 months doing technical due diligence on startups, and I keep seeing the same pattern. A 12-person team running 40+ microservices, three different message queues, a service mesh nobody fully understands, and a cloud bill that makes the founders physically wince.

The data backs up what I'm seeing on the ground. According to the CNCF Q1 2026 report, 42% of organisations that initially adopted microservices have consolidated at least some services back into larger deployable units. That's not a blip - that's nearly half the industry admitting they went too far, too fast.

Service mesh adoption - the thing that was supposed to solve the complexity microservices created - plummeted from 18% in Q3 2023 to 8% in Q3 2025. Teams aren't just questioning microservices. They're actively dismantling the tooling that propped them up.

Shopify, Amazon, and Stack Overflow - What They Actually Did

Shopify: 30TB per Minute on a Monolith

Shopify's codebase has roughly 2 million classes, over 4,000 components, and hundreds of concurrent contributors. During Black Friday/Cyber Monday 2025, it handled a peak of 30TB per minute of data throughput. All on a modular monolith.

They didn't just leave their monolith as-is and hope for the best. They built an open-source static analysis tool called Packwerk that enforces explicit domain boundaries via package.yml files. Every module has clear ownership, defined interfaces, and automated enforcement of those boundaries. The result? Internal measurements from April 2026 show that new-developer onboarding time fell by 55%, and cross-module regressions dropped by 68% year-over-year.
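To make the idea of declared dependencies concrete, here is a minimal Python sketch of the kind of check such a tool performs. It is not Packwerk's code or its config format: the `Package` class, `find_violations`, and the `checkout`/`billing` package names are all hypothetical, chosen only to illustrate the principle that a module may reference another module only if it has declared that dependency.

```python
from dataclasses import dataclass, field


@dataclass(frozen=True)
class Package:
    """A module plus the other modules it has declared as dependencies."""
    name: str
    dependencies: frozenset[str] = field(default_factory=frozenset)


def find_violations(packages, references):
    """Return references that cross into a package the source never declared.

    `references` is an iterable of (source_package, target_package) pairs,
    e.g. as produced by a static scan of the codebase.
    """
    by_name = {p.name: p for p in packages}
    violations = []
    for source, target in references:
        if source == target:
            continue  # references inside one package are always allowed
        if target not in by_name[source].dependencies:
            violations.append((source, target))
    return violations


# Hypothetical packages: checkout may call billing, but not the reverse.
PACKAGES = [
    Package("checkout", frozenset({"billing"})),
    Package("billing"),
]
REFERENCES = [
    ("checkout", "billing"),
    ("billing", "checkout"),
    ("billing", "billing"),
]

print(find_violations(PACKAGES, REFERENCES))  # [('billing', 'checkout')]
```

Run in CI, a check like this turns an accidental back-reference into a failed build rather than a code review debate.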

That's the bit most people miss about modular monoliths. It's not about staying simple because you're small. It's about staying simple BECAUSE you're scaling.

Amazon Prime Video: 90% Cost Cut

In 2023, Amazon's Prime Video team published a case study that made the entire industry uncomfortable. Their Video Quality Analysis service - built on AWS Step Functions with a fully distributed serverless architecture - hit a hard scaling limit at just 5% of expected load. The inter-service communication costs were astronomical, with expensive Tier-1 calls to S3 for intermediate video frame storage between each microservice.

They rebuilt it as a monolith. Data transfer that previously went through S3 now happens in memory. The result: 90% infrastructure cost reduction. Not 10%. Not 30%. Ninety percent.
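The shape of that change can be sketched in a few lines of Python. This is an illustration only, not Amazon's code: `decode`, `detect_defects`, and `analyse` are hypothetical stand-ins for pipeline stages, and the "frames" are just byte strings. The point is that each stage hands its output to the next inside one process, so nothing is written to or read from external storage between steps.

```python
from typing import Iterable, Iterator


def decode(raw_chunks: Iterable[bytes]) -> Iterator[bytes]:
    """Stand-in for a frame decoder: yields one 'frame' per chunk."""
    for chunk in raw_chunks:
        yield chunk.upper()


def detect_defects(frames: Iterable[bytes]) -> Iterator[tuple[int, bool]]:
    """Stand-in for a quality detector: flags frames containing b'X'."""
    for index, frame in enumerate(frames):
        yield index, b"X" in frame


def analyse(raw_chunks: Iterable[bytes]) -> list[int]:
    """Run both stages in one process; frames are passed along in memory."""
    return [
        index
        for index, defective in detect_defects(decode(raw_chunks))
        if defective
    ]


print(analyse([b"abc", b"axc", b"xyz"]))  # [1, 2]
```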

Stack Overflow: 9 Servers for the Entire Platform

Stack Overflow serves millions of developers daily on just 9 web servers. The entire platform runs as a monolith, with Redis for caching and Elasticsearch for search bolted on the side. No Kubernetes. No service mesh. No 47-service dependency chain to render a search result.

They got there through careful performance tuning, efficient database queries, and aggressive caching - not by throwing architectural complexity at the problem.

Why Microservices Went Wrong for Most Teams

Microservices were the right answer - for about 5% of the teams that adopted them. The other 95% adopted them because a conference talk or a blog post from Netflix made them feel like they should.

The core problem is that microservices trade code complexity for operational complexity. You get cleaner service boundaries, but you also get distributed tracing, eventual consistency, network partitions, deployment coordination, contract testing, and a cloud bill that grows faster than your revenue.

Some numbers that spell this out:

| Cost Factor | Microservices Reality | Modular Monolith Reality |
|---|---|---|
| Cloud waste | 30% average waste on unused/misconfigured resources | Single deployment = fewer idle resources |
| Inter-service traffic | Thousands of $/month in inter-AZ network costs | In-memory function calls, zero network overhead |
| Kubernetes overhead | 53% of K8s users say resource optimisation is their top challenge | Often no K8s needed at all |
| Team coordination | Distributed ownership, contract negotiations between teams | Shared codebase, enforced boundaries (e.g. Packwerk, ArchUnit) |
| Developer onboarding | Weeks to understand service dependencies and deployment pipelines | Hours to run locally, days to contribute (Shopify saw 55% faster onboarding) |
| Debugging | Distributed tracing across N services with eventual consistency | Stack traces, breakpoints, standard debugging tools |

With global cloud spending hitting $1.3 trillion in 2025 and 67% of enterprises running multi-cloud, the operational tax of microservices is no longer something teams can ignore. Especially startups burning through runway.

When a Modular Monolith Actually Makes Sense

I'm not saying microservices are dead. I'm saying they're a scaling tool, not a starting architecture. The distinction matters enormously for early-stage and growth-stage companies.

A modular monolith makes sense when:

| Scenario | Why Modular Monolith Wins |
|---|---|
| Team of 3-30 engineers | Not enough people to own 15+ services properly. Shared ownership in a monolith is simpler. |
| Pre-Series B startup | You need speed and the ability to pivot. Refactoring module boundaries is hours. Refactoring service boundaries is weeks. |
| Tightly coupled domain | If your features share data and logic heavily, microservices just create distributed coupling - the worst kind. |
| Limited DevOps capacity | No dedicated platform team? You can't afford the K8s/Docker/CI-CD overhead of microservices. |
| Preparing for tech DD | Investors and acquirers care about code quality, not architecture fashions. A well-structured monolith passes DD faster than a messy microservices sprawl. |

Microservices start making genuine sense when you have 50+ engineers, genuinely independent deployment needs (different scaling profiles, different release cadences), and a platform team to manage the infrastructure.
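As a rough rule of thumb, those three conditions can be written down as a single check. The sketch below is only a restatement of the paragraph above in Python: the `TeamProfile` fields and the `suggested_architecture` function are hypothetical names, and the 50-engineer threshold is the heuristic from this article, not a hard limit.

```python
from dataclasses import dataclass


@dataclass
class TeamProfile:
    engineers: int
    has_platform_team: bool
    # e.g. different scaling profiles or different release cadences
    independent_deploy_needs: bool


def suggested_architecture(team: TeamProfile) -> str:
    """All three conditions must hold before microservices are worth considering."""
    if (
        team.engineers >= 50
        and team.has_platform_team
        and team.independent_deploy_needs
    ):
        return "consider microservices"
    return "modular monolith"


print(suggested_architecture(TeamProfile(12, False, False)))  # modular monolith
print(suggested_architecture(TeamProfile(80, True, True)))    # consider microservices
```

Note that the conditions are joined with "and": a large team with no platform team still lands on the modular monolith.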

How to Build a Modular Monolith That Doesn't Become a Big Ball of Mud

The fear with monoliths is always the same: "It'll become an unmaintainable mess." And that's a fair concern - if you don't enforce boundaries. The whole point of a MODULAR monolith is that the boundaries exist, they're just enforced at the code level rather than the network level.
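To show what "enforced at the code level" can look like, here is a small Python sketch that scans source code for imports reaching into another module's private package. It assumes a hypothetical convention, not taken from any particular tool: private code lives under `<module>.internal`, and everything else in the module is its public API. Dedicated tools do far more than this; the sketch only illustrates the principle.

```python
import ast


def internal_import_violations(source: str, current_module: str) -> list[str]:
    """List imports that reach into another module's `internal` package.

    Assumed (hypothetical) convention: `<module>.internal...` is private,
    everything else under `<module>` is public.
    """
    violations = []
    for node in ast.walk(ast.parse(source)):
        if isinstance(node, ast.Import):
            names = [alias.name for alias in node.names]
        elif isinstance(node, ast.ImportFrom) and node.module and node.level == 0:
            # Include the imported name so `from billing import internal` is caught.
            names = [f"{node.module}.{alias.name}" for alias in node.names]
        else:
            continue  # not an absolute import statement
        for name in names:
            parts = name.split(".")
            if "internal" in parts[1:] and parts[0] != current_module:
                violations.append(name)
    return violations


# Hypothetical file belonging to the `checkout` module.
SAMPLE = """
from billing.api import charge_customer
from billing.internal.ledger import Ledger
import checkout.internal.cart
"""

print(internal_import_violations(SAMPLE, current_module="checkout"))
# ['billing.internal.ledger.Ledger']
```

The import of `checkout.internal.cart` passes because a module is allowed to use its own internals; only the reach into `billing.internal` is flagged.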

Here's what I recommend to the startups I work with as a fractional CTO:

1. Define domain modules from day one. Use Domain-Driven Design to identify your bounded contexts. Each module gets its own directory, its own models, its own public API. Internal classes are genuinely internal - not accidentally imported by other modules.

2. Enforce boundaries with tooling. Shopify uses Packwerk. Java teams use ArchUnit. .NET teams often pair Vertical Slice Architecture with strict namespace rules, which is a convention rather than a tool and so needs an automated check behind it. The tool doesn't matter - the enforcement does. If boundaries aren't automated, they'll erode within 3 sprints.

3. Keep your database schema modular too. Each module should own its tables. Cross-module data access goes through defined interfaces, not direct SQL joins. This is the bit most teams skip, and it's exactly what makes extraction to a separate service possible later if you genuinely need it.

4. Design for extractability, not extraction. Structure your code so that a module COULD become a service, but don't make it one until you have a concrete reason. "It might need to scale independently" is not a concrete reason. "This module processes 10x more requests than everything else and has different SLA requirements" is.

5. Test module boundaries, not just functions. Integration tests should verify that modules interact correctly through their public interfaces. If a test reaches into another module's internals, your boundaries are already broken.
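Points 1, 3, and 5 can be sketched together in Python using the standard library's `sqlite3`. Everything here is illustrative: `BillingModule`, `CheckoutModule`, and the `billing_invoices` table are hypothetical names, and a real codebase would split these across packages. The sketch shows one module owning its table, a second module going through the first one's public methods, and a test that checks the interaction without touching the owner's internals.

```python
import sqlite3
from typing import Optional


class BillingModule:
    """Owns the `billing_invoices` table; other modules never query it directly."""

    def __init__(self, conn: sqlite3.Connection) -> None:
        self._conn = conn
        self._conn.execute(
            "CREATE TABLE IF NOT EXISTS billing_invoices "
            "(order_id INTEGER PRIMARY KEY, amount_cents INTEGER NOT NULL)"
        )

    # Public interface of the module.
    def record_invoice(self, order_id: int, amount_cents: int) -> None:
        self._conn.execute(
            "INSERT INTO billing_invoices (order_id, amount_cents) VALUES (?, ?)",
            (order_id, amount_cents),
        )

    def invoice_total(self, order_id: int) -> Optional[int]:
        row = self._conn.execute(
            "SELECT amount_cents FROM billing_invoices WHERE order_id = ?",
            (order_id,),
        ).fetchone()
        return row[0] if row else None


class CheckoutModule:
    """Depends on billing's public methods, not on billing's tables."""

    def __init__(self, billing: BillingModule) -> None:
        self._billing = billing

    def place_order(self, order_id: int, amount_cents: int) -> str:
        self._billing.record_invoice(order_id, amount_cents)
        return f"order {order_id} placed"


def test_checkout_talks_to_billing_through_its_interface() -> None:
    conn = sqlite3.connect(":memory:")
    billing = BillingModule(conn)
    checkout = CheckoutModule(billing)

    assert checkout.place_order(42, 1999) == "order 42 placed"
    # Assert via billing's public API, not via a SELECT written in the test.
    assert billing.invoice_total(42) == 1999
    assert billing.invoice_total(7) is None


test_checkout_talks_to_billing_through_its_interface()
```

Because checkout only ever sees `record_invoice` and `invoice_total`, swapping the in-process `BillingModule` for a client that calls a separate service later would not change checkout's code, which is what designing for extractability means in practice.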

What This Means If You're Building a Startup Right Now

If you're a founder or early-stage CTO reading this, the practical takeaway is straightforward: start with a modular monolith. Not because it's trendy (though, ironically, it now is), but because it gives you the fastest path from idea to working product while keeping your architecture clean enough to survive technical due diligence and scale with your team.

At Metamindz, this is exactly what we advise on our fractional CTO engagements and software development projects. We've seen too many seed-stage startups blow 6 months and £100K+ on microservices infrastructure they didn't need. The smart ones start simple, enforce boundaries, and extract services only when the data says they should.

| Approach | Typical Agency / Consultancy | CTO-Led Approach (Metamindz) |
|---|---|---|
| Architecture decision | "Let's use microservices - it's industry standard" | "Let's look at your team size, domain, and runway before choosing" |
| Boundary enforcement | Convention-based, erodes over time | Automated tooling (Packwerk, ArchUnit, or custom linting rules) |
| Scaling strategy | Over-engineer upfront "just in case" | Design for extractability, extract when data justifies it |
| Tech DD readiness | Rarely considered during initial build | Architecture designed to pass investor scrutiny from day one |

Frequently Asked Questions

What is a modular monolith?

A modular monolith is a single deployable application where code is organised into well-defined, loosely coupled modules with enforced boundaries between them. Unlike a traditional monolith, each module has clear ownership, defined interfaces, and automated boundary enforcement using tools like Packwerk or ArchUnit. It combines deployment simplicity with architectural discipline.

Is the modular monolith replacing microservices?

Not entirely, but there's a significant correction happening. The CNCF reports that 42% of organisations are consolidating microservices back into larger units. For teams under 50 engineers or companies pre-Series B, a modular monolith is increasingly the pragmatic default. Microservices remain valid for large organisations with genuine independent scaling needs.

Can a modular monolith scale to handle high traffic?

Absolutely. Shopify handles 30TB per minute during Black Friday on a modular monolith. Stack Overflow serves millions of daily users on 9 web servers. Scaling a monolith means vertical scaling plus horizontal replication of the entire application - which is simpler and cheaper than managing dozens of independently scaled services for most workloads.

When should a startup switch from a monolith to microservices?

When you have concrete evidence that specific modules need independent scaling, different release cadences, or genuinely separate team ownership - AND you have the platform engineering capacity to manage the operational overhead. For most startups, that's post-Series B with 50+ engineers. Switching earlier usually creates more problems than it solves.

How does architecture choice affect technical due diligence?

Investors and acquirers care about code quality, test coverage, documentation, and maintainability - not whether you're using microservices. A well-structured modular monolith with enforced boundaries, clear module ownership, and good test coverage will pass technical due diligence more smoothly than a tangled microservices architecture with unclear service boundaries and poor observability.